JBoss.orgCommunity Documentation

A clustered, transactional cache

Release 3.0.0 Naga

Copyright © 2004, 2005, 2006, 2007, 2008 JBoss, a division of Red Hat Inc., and all authors as named.

October 2008

- Preface

- I. Introduction to JBoss Cache

- 1. Overview

- 2. User API

- 3. Configuration

- 4. Batching API

- 5. Deploying JBoss Cache

- 6. Version Compatibility and Interoperability

- II. JBoss Cache Architecture

- 7. Architecture

- 8. Cache Modes and Clustering

- 9. Cache Loaders

- 10. Eviction

- 11. Transactions and Concurrency

- III. JBoss Cache Configuration References

This is the official JBoss Cache Users' Guide. Along with its accompanying documents (an FAQ, a tutorial and a whole set of documents on POJO Cache), this is freely available on the JBoss Cache documentation website.

When used, JBoss Cache refers to JBoss Cache Core, a tree-structured, clustered, transactional cache. POJO Cache, also a part of the JBoss Cache distribution, is documented separately. (POJO Cache is a cache that deals with Plain Old Java Objects, complete with object relationships, with the ability to cluster such POJOs while maintaining their relationships. Please see the POJO Cache documentation for more information about this.)

This book is targeted at developers wishing to use JBoss Cache as either a standalone in-memory cache, a distributed or replicated cache, a clustering library, or an in-memory database. It is targeted at application developers who wish to use JBoss Cache in their code base, as well as "OEM" developers who wish to build on and extend JBoss Cache features. As such, this book is split into two major sections - one detailing the "User" API and the other going much deeper into specialist topics and the JBoss Cache architecture.

In general, a good knowledge of the Java programming language along with a strong appreciation and understanding of transactions and concurrent programming is necessary. No prior knowledge of JBoss Application Server is expected or required.

For further discussion, use the user forum available on the JBoss Cache website. We also provide a mechanism for tracking bug reports and feature requests on the JBoss Cache JIRA issue tracker.

If you are interested in the development of JBoss Cache or in translating this documentation into other languages, we'd love to hear from you. Please post a message on the JBoss Cache user forum or contact us by using the JBoss Cache developer mailing list.

This book is specifically targeted at the JBoss Cache release of the same version number. It may not apply to older or newer releases of JBoss Cache. It is important that you use the documentation appropriate to the version of JBoss Cache you intend to use.

I always appreciate feedback, suggestions and corrections, and these should be directed to the developer mailing list rather than direct emails to any of the authors. We hope you find this book useful, and wish you happy reading!

Manik Surtani, October 2008

This section covers what developers would need to quickly start using JBoss Cache in their projects. It covers an overview of the concepts and API, configuration and deployment information.

Table of Contents

- 1. Overview

- 2. User API

- 3. Configuration

- 4. Batching API

- 5. Deploying JBoss Cache

- 6. Version Compatibility and Interoperability

JBoss Cache is a tree-structured, clustered, transactional cache. It can be used in a standalone, non-clustered environment, to cache frequently accessed data in memory thereby removing data retrieval or calculation bottlenecks while providing "enterprise" features such as JTA compatibility, eviction and persistence.

JBoss Cache is also a clustered cache, and can be used in a cluster to replicate state providing a high degree of failover. A variety of replication modes are supported, including invalidation and buddy replication, and network communications can either be synchronous or asynchronous.

When used in a clustered mode, the cache is an effective mechanism of building high availability, fault tolerance and even load balancing into custom applications and frameworks. For example, the JBoss Application Server and Red Hat's Enterprise Application Platform make extensive use of JBoss Cache to cluster services such as HTTP and EJB sessions, as well as providing a distributed entity cache for JPA.

JBoss Cache can - and often is - used outside of JBoss AS, in other Java EE environments such as Spring, Tomcat, Glassfish, BEA WebLogic, IBM WebSphere, and even in standalone Java programs thanks to its minimal dependency set.

POJO Cache is an extension of the core JBoss Cache API. POJO Cache offers additional functionality such as:

- maintaining object references even after replication or persistence.

- fine grained replication, where only modified object fields are replicated.

- "API-less" clustering model where POJOs are simply annotated as being clustered.

POJO Cache has a complete and separate set of documentation, including a Users' Guide, FAQ and tutorial all available on the JBoss Cache documentation website. As such, POJO Cache will not be discussed further in this book.

JBoss Cache offers a simple and straightforward API, where data - simple Java objects - can be placed in the cache. Based on configuration options selected, this data may be one or all of:

- cached in-memory for efficient, thread-safe retrieval.

- replicated to some or all cache instances in a cluster.

- persisted to disk and/or a remote, in-memory cache cluster ("far-cache").

- garbage collected from memory when memory runs low, and passivated to disk so state isn't lost.

In addition, JBoss Cache offers a rich set of enterprise-class features:

- being able to participate in JTA transactions (works with most Java EE compliant transaction managers).

- attach to JMX consoles and provide runtime statistics on the state of the cache.

- allow client code to attach listeners and receive notifications on cache events.

- allow grouping of cache operations into batches, for efficient replication

The cache is organized as a tree, with a single root. Each node in the tree essentially contains a map,

which acts as a store for key/value pairs. The only requirement placed on objects that are cached is that

they implement java.io.Serializable.

JBoss Cache can be either local or replicated. Local caches exist only within the scope of the JVM in which they are created, whereas replicated caches propagate any changes to some or all other caches in the same cluster. A cluster may span different hosts on a network or just different JVMs on a single host.

When a change is made to an object in the cache and that change is done in the context of a transaction, the replication of changes is deferred until the transaction completes successfully. All modifications are kept in a list associated with the transaction of the caller. When the transaction commits, changes are replicated. Otherwise, on a rollback, we simply undo the changes locally and discard the modification list, resulting in zero network traffic and overhead. For example, if a caller makes 100 modifications and then rolls back the transaction, nothing is replicated, resulting in no network traffic.

If a caller has no transaction or batch associated with it, modifications are replicated immediately. E.g. in the example used earlier, 100 messages would be broadcast for each modification. In this sense, running without a batch or transaction can be thought of as analogous as running with auto-commit switched on in JDBC terminology, where each operation is committed automatically and immediately.

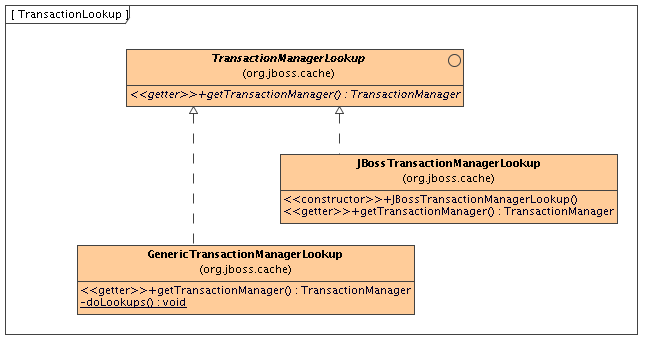

JBoss Cache works out of the box with most popular transaction managers, and even provides an API where custom transaction manager lookups can be written.

All of the above holds true for batches as well, which has similar behavior.

The cache is completely thread-safe. It employs multi-versioned concurrency control (MVCC) to ensure thread safety between readers and writers, while maintaining a high degree of concurrency. The specific MVCC implementation used in JBoss Cache allows for reader threads to be completely free of locks and synchronized blocks, ensuring a very high degree of performance for read-heavy applications. It also uses custom, highly performant lock implementations that employ modern compare-and-swap techniques for writer threads, which are tuned to multi-core CPU architectures.

Multi-versioned concurrency control (MVCC) is the default locking scheme since JBoss Cache 3.x. Optimistic and pessimistic locking schemes from older versions of JBoss Cache are still available but are deprecated in favor of MVCC, and will be removed in future releases. Use of these deprecated locking schemes are strongly discouraged.

The JBoss Cache MVCC implementation only supports READ_COMMITTED and REPEATABLE_READ isolation levels, corresponding to their database equivalents. See the section on transactions and concurrency for details on MVCC.

JBoss Cache requires a Java 5.0 (or newer) compatible virtual machine and set of libraries, and is developed and tested on Sun's JDK 5.0 and JDK 6.

There is a way to build JBoss Cache as a Java 1.4.x compatible binary using JBossRetro to retroweave the Java 5.0 binaries. However, Red Hat Inc. does not offer professional support around the retroweaved binary at this time and the Java 1.4.x compatible binary is not in the binary distribution. See this wiki page for details on building the retroweaved binary for yourself.

In addition to Java 5.0, at a minimum, JBoss Cache has dependencies on JGroups, and Apache's commons-logging. JBoss Cache ships with all dependent libraries necessary to run out of the box, as well as several optional jars for optional features.

JBoss Cache is an open source project, using the business and OEM-friendly OSI-approved LGPL license. Commercial development support, production support and training for JBoss Cache is available through JBoss, a division of Red Hat Inc.

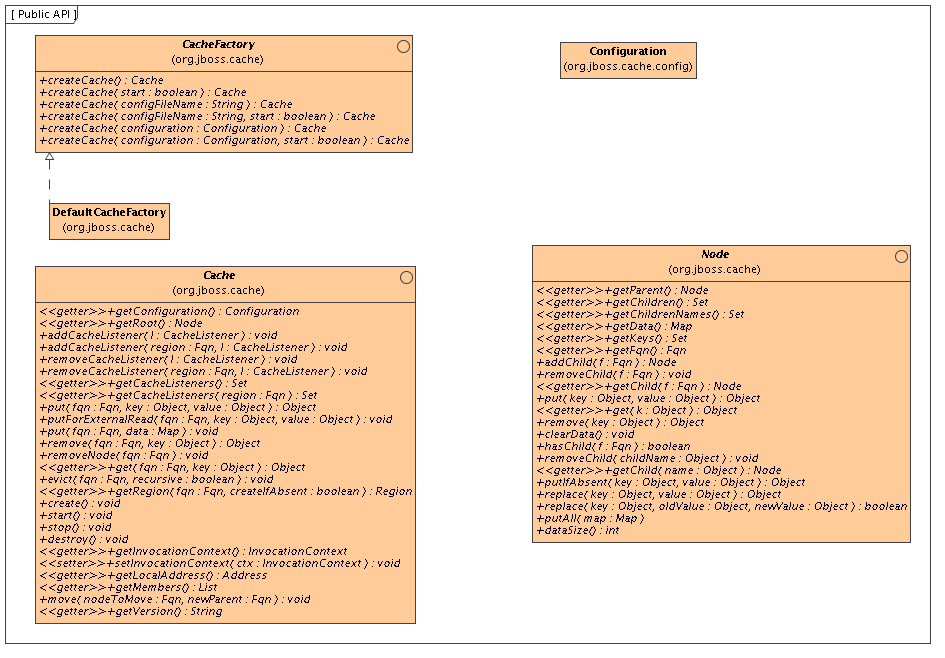

The Cache interface is the primary mechanism for interacting with JBoss Cache. It is

constructed and optionally started using the CacheFactory. The CacheFactory

allows you to create a Cache either from a Configuration object or an XML

file. The cache organizes data into a tree structure, made up of nodes. Once you have a reference to a

Cache, you can use it to look up Node objects in the tree structure,

and store data in the tree.

Note that the diagram above only depicts some of the more popular API methods. Reviewing the javadoc for the above interfaces is the best way to learn the API. Below, we cover some of the main points.

An instance of the Cache interface can only be created via a CacheFactory.

This is unlike JBoss Cache 1.x, where an instance of the old TreeCache class could be directly

instantiated.

The CacheFactory provides a number of overloaded methods for creating a Cache,

but they all fundamentally do the same thing:

-

Gain access to a

Configuration, either by having one passed in as a method parameter or by parsing XML content and constructing one. The XML content can come from a provided input stream, from a classpath or filesystem location. See the chapter on Configuration for more on obtaining aConfiguration. -

Instantiate the

Cacheand provide it with a reference to theConfiguration. -

Optionally invoke the cache's

create()andstart()methods.

Here is an example of the simplest mechanism for creating and starting a cache, using the default configuration values:

CacheFactory factory = new DefaultCacheFactory();

Cache cache = factory.createCache();

In this example, we tell the CacheFactory to find and parse a configuration file on

the classpath:

CacheFactory factory = new DefaultCacheFactory();

Cache cache = factory.createCache("cache-configuration.xml");

In this example, we configure the cache from a file, but want to programatically change a configuration element. So, we tell the factory not to start the cache, and instead do it ourselves:

CacheFactory factory = new DefaultCacheFactory();

Cache cache = factory.createCache("/opt/configurations/cache-configuration.xml", false);

Configuration config = cache.getConfiguration();

config.setClusterName(this.getClusterName());

// Have to create and start cache before using it

cache.create();

cache.start();

Next, lets use the Cache API to access a Node in the cache and then

do some simple reads and writes to that node.

// Let's get a hold of the root node.

Node rootNode = cache.getRoot();

// Remember, JBoss Cache stores data in a tree structure.

// All nodes in the tree structure are identified by Fqn objects.

Fqn peterGriffinFqn = Fqn.fromString("/griffin/peter");

// Create a new Node

Node peterGriffin = rootNode.addChild(peterGriffinFqn);

// let's store some data in the node

peterGriffin.put("isCartoonCharacter", Boolean.TRUE);

peterGriffin.put("favoriteDrink", new Beer());

// some tests (just assume this code is in a JUnit test case)

assertTrue(peterGriffin.get("isCartoonCharacter"));

assertEquals(peterGriffinFqn, peterGriffin.getFqn());

assertTrue(rootNode.hasChild(peterGriffinFqn));

Set keys = new HashSet();

keys.add("isCartoonCharacter");

keys.add("favoriteDrink");

assertEquals(keys, peterGriffin.getKeys());

// let's remove some data from the node

peterGriffin.remove("favoriteDrink");

assertNull(peterGriffin.get("favoriteDrink");

// let's remove the node altogether

rootNode.removeChild(peterGriffinFqn);

assertFalse(rootNode.hasChild(peterGriffinFqn));

The Cache interface also exposes put/get/remove operations that take an

Fqn as an argument, for convenience:

Fqn peterGriffinFqn = Fqn.fromString("/griffin/peter");

cache.put(peterGriffinFqn, "isCartoonCharacter", Boolean.TRUE);

cache.put(peterGriffinFqn, "favoriteDrink", new Beer());

assertTrue(peterGriffin.get(peterGriffinFqn, "isCartoonCharacter"));

assertTrue(cache.getRootNode().hasChild(peterGriffinFqn));

cache.remove(peterGriffinFqn, "favoriteDrink");

assertNull(cache.get(peterGriffinFqn, "favoriteDrink");

cache.removeNode(peterGriffinFqn);

assertFalse(cache.getRootNode().hasChild(peterGriffinFqn));

A Node should be viewed as a named logical grouping of data. A node should be used to contain data for a single data record, for example information about a particular person or account. It should be kept in mind that all aspects of the cache - locking, cache loading, replication and eviction - happen on a per-node basis. As such, anything grouped together by being stored in a single node will be treated as a single atomic unit.

The previous section used the Fqn class in its examples; now let's learn a bit more about

that class.

A Fully Qualified Name (Fqn) encapsulates a list of names which represent a path to a particular location in

the cache's tree structure. The elements in the list are typically Strings but can be

any Object or a mix of different types.

This path can be absolute (i.e., relative to the root node), or relative to any node in the cache. Reading the

documentation on each API call that makes use of Fqn will tell you whether the API expects

a relative or absolute Fqn.

The Fqn class provides are variety of factory methods; see the javadoc for all the

possibilities. The following illustrates the most commonly used approaches to creating an Fqn:

// Create an Fqn pointing to node 'Joe' under parent node 'Smith'

// under the 'people' section of the tree

// Parse it from a String

Fqn abc = Fqn.fromString("/people/Smith/Joe/");

// Here we want to use types other than String

Fqn acctFqn = Fqn.fromElements("accounts", "NY", new Integer(12345));

Note that

Fqn f = Fqn.fromElements("a", "b", "c");

is the same as

Fqn f = Fqn.fromString("/a/b/c");

It is good practice to stop and destroy your cache when you are done using it, particularly if it is a clustered cache and has thus used a JGroups channel. Stopping and destroying a cache ensures resources like network sockets and maintenance threads are properly cleaned up.

cache.stop();

cache.destroy();

Not also that a cache that has had

stop()

invoked

on it can be started again with a new call to

start()

.

Similarly, a cache that has had

destroy()

invoked

on it can be created again with a new call to

create()

(and then started again with a

start()

call).

Although technically not part of the API, the mode in which the cache is configured to

operate affects the cluster-wide behavior of any put or remove

operation, so we'll briefly mention the various modes here.

JBoss Cache modes are denoted by the org.jboss.cache.config.Configuration.CacheMode

enumeration. They consist of:

- LOCAL - local, non-clustered cache. Local caches don't join a cluster and don't communicate with other caches in a cluster.

- REPL_SYNC - synchronous replication. Replicated caches replicate all changes to the other caches in the cluster. Synchronous replication means that changes are replicated and the caller blocks until replication acknowledgements are received.

- REPL_ASYNC - asynchronous replication. Similar to REPL_SYNC above, replicated caches replicate all changes to the other caches in the cluster. Being asynchronous, the caller does not block until replication acknowledgements are received.

- INVALIDATION_SYNC - if a cache is configured for invalidation rather than replication, every time data is changed in a cache other caches in the cluster receive a message informing them that their data is now stale and should be evicted from memory. This reduces replication overhead while still being able to invalidate stale data on remote caches.

- INVALIDATION_ASYNC - as above, except this invalidation mode causes invalidation messages to be broadcast asynchronously.

See the chapter on Clustering for more details on how cache mode affects behavior. See the chapter on Configuration for info on how to configure things like cache mode.

JBoss Cache provides a convenient mechanism for registering notifications on cache events.

Object myListener = new MyCacheListener();

cache.addCacheListener(myListener);

Similar methods exist for removing or querying registered listeners. See the javadocs on the

Cache interface for more details.

Basically any public class can be used as a listener, provided it is annotated with the

@CacheListener annotation. In addition, the class needs to have one or

more methods annotated with one of the method-level annotations (in the

org.jboss.cache.notifications.annotation

package). Methods annotated as such need to be public, have a void return type, and accept a single parameter

of

type

org.jboss.cache.notifications.event.Event

or one of its subtypes.

@CacheStarted- methods annotated such receive a notification when the cache is started. Methods need to accept a parameter type which is assignable fromCacheStartedEvent.@CacheStopped- methods annotated such receive a notification when the cache is stopped. Methods need to accept a parameter type which is assignable fromCacheStoppedEvent.@NodeCreated- methods annotated such receive a notification when a node is created. Methods need to accept a parameter type which is assignable fromNodeCreatedEvent.@NodeRemoved- methods annotated such receive a notification when a node is removed. Methods need to accept a parameter type which is assignable fromNodeRemovedEvent.@NodeModified- methods annotated such receive a notification when a node is modified. Methods need to accept a parameter type which is assignable fromNodeModifiedEvent.@NodeMoved- methods annotated such receive a notification when a node is moved. Methods need to accept a parameter type which is assignable fromNodeMovedEvent.@NodeVisited- methods annotated such receive a notification when a node is started. Methods need to accept a parameter type which is assignable fromNodeVisitedEvent.@NodeLoaded- methods annotated such receive a notification when a node is loaded from aCacheLoader. Methods need to accept a parameter type which is assignable fromNodeLoadedEvent.@NodeEvicted- methods annotated such receive a notification when a node is evicted from memory. Methods need to accept a parameter type which is assignable fromNodeEvictedEvent.@NodeInvalidated- methods annotated such receive a notification when a node is evicted from memory due to a remote invalidation event. Methods need to accept a parameter type which is assignable fromNodeInvalidatedEvent.@NodeActivated- methods annotated such receive a notification when a node is activated. Methods need to accept a parameter type which is assignable fromNodeActivatedEvent.@NodePassivated- methods annotated such receive a notification when a node is passivated. Methods need to accept a parameter type which is assignable fromNodePassivatedEvent.@TransactionRegistered- methods annotated such receive a notification when the cache registers ajavax.transaction.Synchronizationwith a registered transaction manager. Methods need to accept a parameter type which is assignable fromTransactionRegisteredEvent.@TransactionCompleted- methods annotated such receive a notification when the cache receives a commit or rollback call from a registered transaction manager. Methods need to accept a parameter type which is assignable fromTransactionCompletedEvent.@ViewChanged- methods annotated such receive a notification when the group structure of the cluster changes. Methods need to accept a parameter type which is assignable fromViewChangedEvent.@CacheBlocked- methods annotated such receive a notification when the cluster requests that cache operations are blocked for a state transfer event. Methods need to accept a parameter type which is assignable fromCacheBlockedEvent.@CacheUnblocked- methods annotated such receive a notification when the cluster requests that cache operations are unblocked after a state transfer event. Methods need to accept a parameter type which is assignable fromCacheUnblockedEvent.@BuddyGroupChanged- methods annotated such receive a notification when a node changes its buddy group, perhaps due to a buddy falling out of the cluster or a newer, closer buddy joining. Methods need to accept a parameter type which is assignable fromBuddyGroupChangedEvent.

Refer to the javadocs on the annotations as well as the

Event subtypes for details of what is passed in to your method, and when.

Example:

@CacheListener

public class MyListener

{

@CacheStarted

@CacheStopped

public void cacheStartStopEvent(Event e)

{

switch (e.getType())

{

case CACHE_STARTED:

System.out.println("Cache has started");

break;

case CACHE_STOPPED:

System.out.println("Cache has stopped");

break;

}

}

@NodeCreated

@NodeRemoved

@NodeVisited

@NodeModified

@NodeMoved

public void logNodeEvent(NodeEvent ne)

{

log("An event on node " + ne.getFqn() + " has occured");

}

}

By default, all notifications are synchronous, in that they happen on the thread of the caller which generated

the event. As such, it is good practise to ensure cache listener implementations don't hold up the thread in

long-running tasks. Alternatively, you could set the CacheListener.sync() attribute to

false, in which case you will not be notified in the caller's thread. See the

configuration reference on tuning this thread pool and size of blocking

queue.

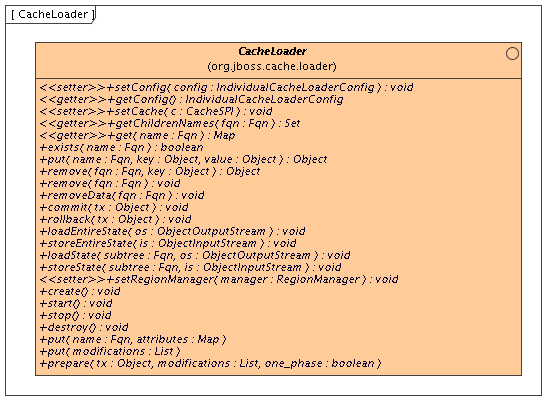

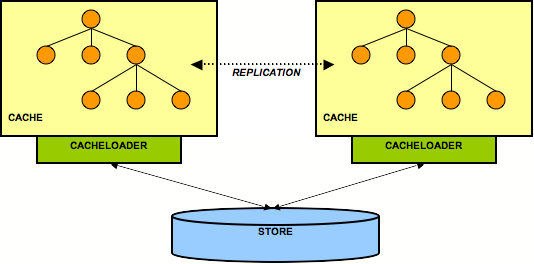

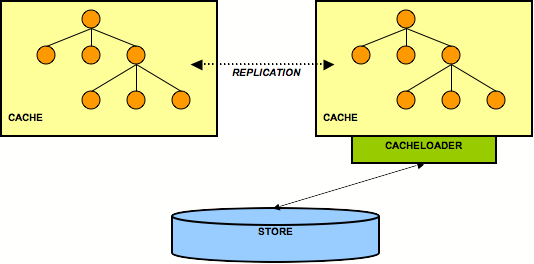

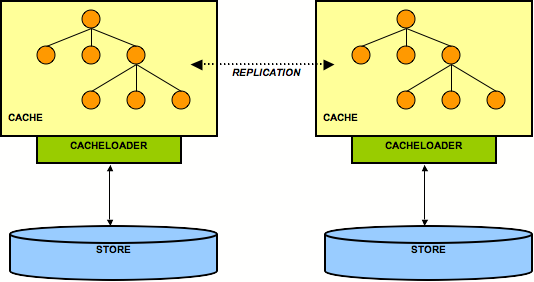

Cache loaders are an important part of JBoss Cache. They allow persistence of nodes to disk or to remote cache clusters, and allow for passivation when caches run out of memory. In addition, cache loaders allow JBoss Cache to perform 'warm starts', where in-memory state can be preloaded from persistent storage. JBoss Cache ships with a number of cache loader implementations.

org.jboss.cache.loader.FileCacheLoader- a basic, filesystem based cache loader that persists data to disk. Non-transactional and not very performant, but a very simple solution. Used mainly for testing and not recommended for production use.org.jboss.cache.loader.JDBCCacheLoader- uses a JDBC connection to store data. Connections could be created and maintained in an internal pool (uses the c3p0 pooling library) or from a configured DataSource. The database this CacheLoader connects to could be local or remotely located.org.jboss.cache.loader.BdbjeCacheLoader- uses Oracle's BerkeleyDB file-based transactional database to persist data. Transactional and very performant, but potentially restrictive license.org.jboss.cache.loader.JdbmCacheLoader- an open source alternative to the BerkeleyDB.org.jboss.cache.loader.tcp.TcpCacheLoader- uses a TCP socket to "persist" data to a remote cluster, using a "far cache" pattern.org.jboss.cache.loader.ClusteredCacheLoader- used as a "read-only" cache loader, where other nodes in the cluster are queried for state. Useful when full state transfer is too expensive and it is preferred that state is lazily loaded.

These cache loaders, along with advanced aspects and tuning issues, are discussed in the chapter dedicated to cache loaders.

Eviction policies are the counterpart to cache loaders. They are necessary to make sure the cache does not run out of memory and when the cache starts to fill, an eviction algorithm running in a separate thread evicts in-memory state and frees up memory. If configured with a cache loader, the state can then be retrieved from the cache loader if needed.

Eviction policies can be configured on a per-region basis, so different subtrees in the cache could have different eviction preferences. JBoss Cache ships with several eviction policies:

org.jboss.cache.eviction.LRUPolicy- an eviction policy that evicts the least recently used nodes when thresholds are hit.org.jboss.cache.eviction.LFUPolicy- an eviction policy that evicts the least frequently used nodes when thresholds are hit.org.jboss.cache.eviction.MRUPolicy- an eviction policy that evicts the most recently used nodes when thresholds are hit.org.jboss.cache.eviction.FIFOPolicy- an eviction policy that creates a first-in-first-out queue and evicts the oldest nodes when thresholds are hit.org.jboss.cache.eviction.ExpirationPolicy- an eviction policy that selects nodes for eviction based on an expiry time each node is configured with.org.jboss.cache.eviction.ElementSizePolicy- an eviction policy that selects nodes for eviction based on the number of key/value pairs held in the node.

Detailed configuration and implementing custom eviction policies are discussed in the chapter dedicated to eviction policies.

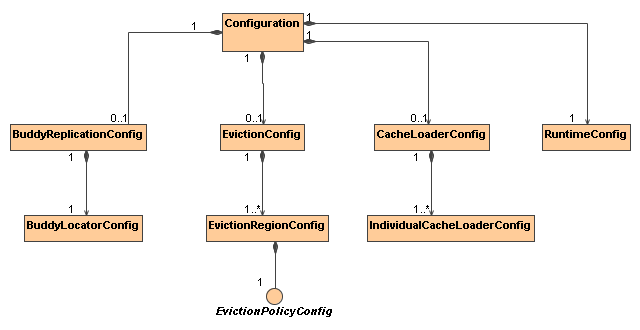

The

org.jboss.cache.config.Configuration

class (along with its component parts)

is a Java Bean that encapsulates the configuration of the Cache

and all of its architectural elements (cache loaders, evictions policies, etc.)

The Configuration exposes numerous properties which

are summarized in the configuration reference

section of this book and many of which are discussed in later chapters. Any time you see a configuration option

discussed in this book, you can assume that the Configuration

class or one of its component parts exposes a simple property setter/getter for that configuration option.

As discussed in the User API section,

before a Cache can be created, the CacheFactory

must be provided with a Configuration object or with a file name or

input stream to use to parse a Configuration from XML. The following sections describe

how to accomplish this.

The most convenient way to configure JBoss Cache is via an XML file. The JBoss Cache distribution ships with a number of configuration files for common use cases. It is recommended that these files be used as a starting point, and tweaked to meet specific needs.

The simplest example of a configuration XML file, a cache configured to run in LOCAL mode, looks like this:

<?xml version="1.0" encoding="UTF-8"?>

<jbosscache xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xmlns="urn:jboss:jbosscache-core:config:3.0">

</jbosscache>

This file uses sensible defaults for isolation levels, lock acquisition timeouts, locking modes, etc. Another, more complete, sample XML file is included in the configuration reference section of this book, along with a handy look-up table explaining the various options.

By default JBoss Cache will validate your XML configuration file against an XML schema and throw an

exception if the configuration is invalid. This can be overridden with the -Djbosscache.config.validate=false

JVM parameter. Alternately, you could specify your own schema to validate against, using the

-Djbosscache.config.schemaLocation=url parameter.

By default though, configuration files are validated against the JBoss Cache configuration schema, which is

included in the jbosscache-core.jar or on http://www.jboss.org/jbosscache/jbosscache-config-3.0.xsd.

Most XML editing tools can be used with this schema to ensure the configuration file you create is correct

and valid.

In addition to the XML-based configuration above, the

Configuration

can be built up programatically,

using the simple property mutators exposed by

Configuration

and its components. When constructed,

the

Configuration

object is preset with JBoss Cache

defaults and can even be used as-is for a quick start.

Configuration config = new Configuration();

config.setTransactionManagerLookupClass( GenericTransactionManagerLookup.class.getName() );

config.setIsolationLevel(IsolationLevel.READ_COMMITTED);

config.setCacheMode(CacheMode.LOCAL);

config.setLockAcquisitionTimeout(15000);

CacheFactory factory = new DefaultCacheFactory();

Cache cache = factory.createCache(config);

Even the above fairly simple configuration is pretty tedious programming;

hence the preferred use of XML-based configuration. However, if your

application requires it, there is no reason not to use XML-based

configuration for most of the attributes, and then access the

Configuration

object to programatically change

a few items from the defaults, add an eviction region, etc.

Note that configuration values may not be changed programmatically when a cache is running,

except those annotated as

@Dynamic

. Dynamic properties are also marked as such in the

configuration reference

table. Attempting to change a non-dynamic

property will result in a

ConfigurationException

.

The

Configuration

class and its

component parts

are all Java Beans that expose all config elements via simple setters

and getters. Therefore, any good IOC framework such as Spring, Google Guice, JBoss Microcontainer, etc. should be able to

build up a

Configuration

from an XML file in

the framework's own format. See the

deployment via the JBoss micrcontainer

section for an example of this.

A

Configuration

is composed of a number of

subobjects:

Following is a brief overview of the components of a

Configuration

. See the javadoc and the linked

chapters in this book for a more complete explanation of the

configurations associated with each component.

Configuration: top level object in the hierarchy; exposes the configuration properties listed in the configuration reference section of this book.BuddyReplicationConfig: only relevant if buddy replication is used. General buddy replication configuration options. Must include a:BuddyLocatorConfig: implementation-specific configuration object for theBuddyLocatorimplementation being used. What configuration elements are exposed depends on the needs of theBuddyLocatorimplementation.EvictionConfig: only relevant if eviction is used. General eviction configuration options. Must include at least one:EvictionRegionConfig: one for each eviction region; names the region, etc. Must include a:EvictionAlgorithmConfig: implementation-specific configuration object for theEvictionAlgorithmimplementation being used. What configuration elements are exposed depends on the needs of theEvictionAlgorithmimplementation.CacheLoaderConfig: only relevant if a cache loader is used. General cache loader configuration options. Must include at least one:IndividualCacheLoaderConfig: implementation-specific configuration object for theCacheLoaderimplementation being used. What configuration elements are exposed depends on the needs of theCacheLoaderimplementation.RuntimeConfig: exposes to cache clients certain information about the cache's runtime environment (e.g. membership in buddy replication groups if buddy replication is used.) Also allows direct injection into the cache of needed external services like a JTATransactionManageror a JGroupsChannelFactory.

Dynamically changing the configuration of

some

options while the cache is running is supported,

by programmatically obtaining the

Configuration

object from the running cache and changing values. E.g.,

Configuration liveConfig = cache.getConfiguration();

liveConfig.setLockAcquisitionTimeout(2000);

A complete listing of which options may be changed dynamically is in the

configuration reference

section. An

org.jboss.cache.config.ConfigurationException

will be thrown if you attempt to change a

setting that is not dynamic.

The Option API allows you to override certain behaviours of the cache on a per invocation basis.

This involves creating an instance of

org.jboss.cache.config.Option

, setting the options

you wish to override on the

Option

object and passing it in the

InvocationContext

before invoking your method on the cache.

E.g., to force a write lock when reading data (when used in a transaction, this provides semantics similar to SELECT FOR UPDATE in a database)

// first start a transaction

cache.getInvocationContext().getOptionOverrides().setForceWriteLock(true);

Node n = cache.getNode(Fqn.fromString("/a/b/c"));

// make changes to the node

// commit transaction

E.g., to suppress replication of a put call in a REPL_SYNC cache:

Node node = cache.getChild(Fqn.fromString("/a/b/c"));

cache.getInvocationContext().getOptionOverrides().setLocalOnly(true);

node.put("localCounter", new Integer(2));

See the javadocs on the

Option

class for details on the options available.

The batching API, introduced in JBoss Cache 3.x, is intended as a mechanism to batch the way calls are replicated independent of JTA transactions.

This is useful when you want to batch up replication calls within a scope finer than that of any ongoing JTA transactions.

To use batching, you need to enable invocation batching in your cache configuration, either on the Configuration object:

Configuration.setInvocationBatchingEnabled(true);

or in your XML file:

<invocationBatching enabled="true"/>

By default, invocation batching is disabled. Note that you do not have to have a transaction manager defined to use batching.

Once you have configured your cache to use batching, you use it by calling startBatch()

and endBatch() on Cache. E.g.,

Cache cache = getCache();

// not using a batch

cache.put("/a", "key", "value"); // will replicate immediately

// using a batch

cache.startBatch();

cache.put("/a", "key", "value");

cache.put("/b", "key", "value");

cache.put("/c", "key", "value");

cache.endBatch(true); // This will now replicate the modifications since the batch was started.

cache.startBatch();

cache.put("/a", "key", "value");

cache.put("/b", "key", "value");

cache.put("/c", "key", "value");

cache.endBatch(false); // This will "discard" changes made in the batch

When used in a standalone Java program, all that needs to be done is to instantiate the cache using the

CacheFactory

and a

Configuration

instance or an XML file, as discussed

in the

User API

and

Configuration

chapters.

The same techniques can be used when an application running in an application

server wishes to programatically deploy a cache rather than relying on an application server's

deployment features. An example of this would be

a webapp deploying a cache via a

javax.servlet.ServletContextListener.

After creation, you could share your cache instance among different application components either by using an IOC container such as Spring, JBoss Microcontainer, etc., or by binding it to JNDI, or simply holding a static reference to the cache.

If, after deploying your cache you wish to expose a management interface to it in JMX, see the section on Programatic Registration in JMX.

Beginning with AS 5, JBoss AS supports deployment of POJO services via

deployment of a file whose name ends with

-beans.xml.

A POJO service is one whose implementation is via a "Plain Old Java Object",

meaning a simple java bean that isn't required to implement any special

interfaces or extend any particular superclass. A

Cache is a POJO service, and all the components in a

Configuration

are also POJOs, so deploying a cache in this way is a natural step.

Deployment of the cache is done using the JBoss Microcontainer that forms the

core of JBoss AS. JBoss Microcontainer is a sophisticated IOC framework

similar to Spring. A -beans.xml file is basically

a descriptor that tells the IOC framework how to assemble the various

beans that make up a POJO service.

For each configurable option exposed by the Configuration

components, a getter/setter must be defined in the configuration class.

This is required so that JBoss Microcontainer can, in typical IOC way,

call these methods when the corresponding properties have been

configured.

You need to ensure that the jbosscache-core.jar and jgroups.jar libraries

are in your server's lib directory. This is usually the case when you use JBoss AS in its

all configuration. Note that you will have to bring in any optional jars you require, such

as jdbm.jar based on your cache configuration.

The following is an example

-beans.xml

file. If you

look in the

server/all/deploy

directory of a JBoss AS 5

installation, you can find several more examples.

<?xml version="1.0" encoding="UTF-8"?>

<deployment xmlns="urn:jboss:bean-deployer:2.0">

<!-- First we create a Configuration object for the cache -->

<bean name="ExampleCacheConfig"

class="org.jboss.cache.config.Configuration">

<!-- Externally injected services -->

<property name="runtimeConfig">

<bean class="org.jboss.cache.config.RuntimeConfig">

<property name="transactionManager">

<inject bean="jboss:service=TransactionManager"

property="TransactionManager"/>

</property>

<property name="muxChannelFactory"><inject bean="JChannelFactory"/></property>

</bean>

</property>

<property name="multiplexerStack">udp</property>

<property name="clusterName">Example-EntityCache</property>

<property name="isolationLevel">REPEATABLE_READ</property>

<property name="cacheMode">REPL_SYNC</property>

<property name="stateRetrievalTimeout">15000</property>

<property name="syncReplTimeout">20000</property>

<property name="lockAcquisitionTimeout">15000</property>

<property name="exposeManagementStatistics">true</property>

</bean>

<!-- Factory to build the Cache. -->

<bean name="DefaultCacheFactory" class="org.jboss.cache.DefaultCacheFactory">

<constructor factoryClass="org.jboss.cache.DefaultCacheFactory"

factoryMethod="getInstance" />

</bean>

<!-- The cache itself -->

<bean name="ExampleCache" class="org.jboss.cache.Cache">

<constructor factoryMethod="createCache">

<factory bean="DefaultCacheFactory"/>

<parameter class="org.jboss.cache.config.Configuration"><inject bean="ExampleCacheConfig"/></parameter>

<parameter class="boolean">false</parameter>

</constructor>

</bean>

</deployment>

See the JBoss Microcontainer documentation

for details on the above syntax. Basically, each

bean

element represents an object and is used to create a

Configuration

and its constituent parts

The DefaultCacheFactory bean constructs the cache,

conceptually doing the same thing as is shown in the

User API chapter.

An interesting thing to note in the above example is the use of the

RuntimeConfig object. External resources like a TransactionManager

and a JGroups ChannelFactory that are visible to the microcontainer are dependency injected

into the RuntimeConfig. The assumption here is that in some other deployment descriptor in

the AS, the referenced beans have already been described.

This feature is not available as of the time of this writing. We will add a wiki page describing how to use it once it becomes available.

JBoss Cache includes JMX MBeans to expose cache functionality and provide statistics that can be used to analyze cache operations. JBoss Cache can also broadcast cache events as MBean notifications for handling via JMX monitoring tools.

JBoss Cache provides an MBean that can be registered with your environments JMX server to allow access

to the cache instance via JMX. This MBean is the

org.jboss.cache.jmx.CacheJmxWrapper.

It is a StandardMBean, so its MBean interface is org.jboss.cache.jmx.CacheJmxWrapperMBean.

This MBean can be used to:

-

Get a reference to the underlying

Cache. -

Invoke create/start/stop/destroy lifecycle operations on the underlying

Cache. - Inspect various details about the cache's current state (number of nodes, lock information, etc.)

- See numerous details about the cache's configuration, and change those configuration items that can be changed when the cache has already been started.

See the CacheJmxWrapperMBean javadoc for more details.

If a CacheJmxWrapper is registered, JBoss Cache also provides MBeans

for several other internal components and subsystems. These MBeans are used to capture and expose

statistics related to the subsystems they represent. They are hierarchically associated with the

CacheJmxWrapper MBean and have service names that reflect this relationship. For

example, a replication interceptor MBean for the jboss.cache:service=TomcatClusteringCache

instance will be accessible through the service named

jboss.cache:service=TomcatClusteringCache,cache-interceptor=ReplicationInterceptor.

The best way to ensure the CacheJmxWrapper is registered in JMX depends on how you are

deploying your cache.

Simplest way to do this is to create your Cache and pass it to the

JmxRegistrationManager constructor.

CacheFactory factory = new DefaultCacheFactory();

// Build but don't start the cache

// (although it would work OK if we started it)

Cache cache = factory.createCache("cache-configuration.xml");

MBeanServer server = getMBeanServer(); // however you do it

ObjectName on = new ObjectName("jboss.cache:service=Cache");

JmxRegistrationManager jmxManager = new JmxRegistrationManager(server, cache, on);

jmxManager.registerAllMBeans();

... use the cache

... on application shutdown

jmxManager.unregisterAllMBeans();

cache.stop();

Alternatively, build a Configuration object and pass it to the

CacheJmxWrapper. The wrapper will construct the Cache on your

behalf.

Configuration config = buildConfiguration(); // whatever it does

CacheJmxWrapperMBean wrapper = new CacheJmxWrapper(config);

MBeanServer server = getMBeanServer(); // however you do it

ObjectName on = new ObjectName("jboss.cache:service=TreeCache");

server.registerMBean(wrapper, on);

// Call to wrapper.create() will build the Cache if one wasn't injected

wrapper.create();

wrapper.start();

// Now that it's built, created and started, get the cache from the wrapper

Cache cache = wrapper.getCache();

... use the cache

... on application shutdown

wrapper.stop();

wrapper.destroy();

CacheJmxWrapper is a POJO, so the microcontainer has no problem creating one. The

trick is getting it to register your bean in JMX. This can be done by specifying the

org.jboss.aop.microcontainer.aspects.jmx.JMX

annotation on the CacheJmxWrapper

bean:

<?xml version="1.0" encoding="UTF-8"?>

<deployment xmlns="urn:jboss:bean-deployer:2.0">

<!-- First we create a Configuration object for the cache -->

<bean name="ExampleCacheConfig"

class="org.jboss.cache.config.Configuration">

... build up the Configuration

</bean>

<!-- Factory to build the Cache. -->

<bean name="DefaultCacheFactory" class="org.jboss.cache.DefaultCacheFactory">

<constructor factoryClass="org.jboss.cache.DefaultCacheFactory"

factoryMethod="getInstance" />

</bean>

<!-- The cache itself -->

<bean name="ExampleCache" class="org.jboss.cache.CacheImpl">

<constructor factoryMethod="createnewInstance">

<factory bean="DefaultCacheFactory"/>

<parameter><inject bean="ExampleCacheConfig"/></parameter>

<parameter>false</parameter>

</constructor>

</bean>

<!-- JMX Management -->

<bean name="ExampleCacheJmxWrapper" class="org.jboss.cache.jmx.CacheJmxWrapper">

<annotation>@org.jboss.aop.microcontainer.aspects.jmx.JMX(name="jboss.cache:service=ExampleTreeCache",

exposedInterface=org.jboss.cache.jmx.CacheJmxWrapperMBean.class,

registerDirectly=true)</annotation>

<constructor>

<parameter><inject bean="ExampleCache"/></parameter>

</constructor>

</bean>

</deployment>

As discussed in the Programatic Registration

section, CacheJmxWrapper can do the work of building, creating and starting the

Cache if it is provided with a Configuration. With the

microcontainer, this is the preferred approach, as it saves the boilerplate XML

needed to create the CacheFactory.

<?xml version="1.0" encoding="UTF-8"?>

<deployment xmlns="urn:jboss:bean-deployer:2.0">

<!-- First we create a Configuration object for the cache -->

<bean name="ExampleCacheConfig"

class="org.jboss.cache.config.Configuration">

... build up the Configuration

</bean>

<bean name="ExampleCache" class="org.jboss.cache.jmx.CacheJmxWrapper">

<annotation>@org.jboss.aop.microcontainer.aspects.jmx.JMX(name="jboss.cache:service=ExampleTreeCache",

exposedInterface=org.jboss.cache.jmx.CacheJmxWrapperMBean.class,

registerDirectly=true)</annotation>

<constructor>

<parameter><inject bean="ExampleCacheConfig"/></parameter>

</constructor>

</bean>

</deployment>

JBoss Cache captures statistics in its interceptors and various other components, and exposes these

statistics through a set of MBeans. Gathering of statistics is enabled by default; this can be disabled for

a specific cache instance through the Configuration.setExposeManagementStatistics()

setter. Note that the majority of the statistics are provided by the CacheMgmtInterceptor,

so this MBean is the most significant in this regard. If you want to disable all statistics for performance

reasons, you set Configuration.setExposeManagementStatistics(false) and this will

prevent the CacheMgmtInterceptor from being included in the cache's interceptor stack

when the cache is started.

If a CacheJmxWrapper is registered with JMX, the wrapper also ensures that

an MBean is registered in JMX for each interceptor and component that exposes statistics.

[1].

Management tools can then access those MBeans to examine the statistics. See the section in the

JMX Reference chapter

pertaining to the statistics that are made available via JMX.

JBoss Cache users can register a listener to receive cache events described earlier in the

User API

chapter. Users can alternatively utilize the cache's management information infrastructure to receive these

events via JMX notifications. Cache events are accessible as notifications by registering a

NotificationListener for the CacheJmxWrapper.

See the section in the JMX Reference chapter

pertaining to JMX notifications for a list of notifications that can be received through the

CacheJmxWrapper.

The following is an example of how to programmatically receive cache notifications when running in a JBoss AS environment. In this example, the client uses a filter to specify which events are of interest.

MyListener listener = new MyListener();

NotificationFilterSupport filter = null;

// get reference to MBean server

Context ic = new InitialContext();

MBeanServerConnection server = (MBeanServerConnection)ic.lookup("jmx/invoker/RMIAdaptor");

// get reference to CacheMgmtInterceptor MBean

String cache_service = "jboss.cache:service=TomcatClusteringCache";

ObjectName mgmt_name = new ObjectName(cache_service);

// configure a filter to only receive node created and removed events

filter = new NotificationFilterSupport();

filter.disableAllTypes();

filter.enableType(CacheNotificationBroadcaster.NOTIF_NODE_CREATED);

filter.enableType(CacheNotificationBroadcaster.NOTIF_NODE_REMOVED);

// register the listener with a filter

// leave the filter null to receive all cache events

server.addNotificationListener(mgmt_name, listener, filter, null);

// ...

// on completion of processing, unregister the listener

server.removeNotificationListener(mgmt_name, listener, filter, null);

The following is the simple notification listener implementation used in the previous example.

private class MyListener implements NotificationListener, Serializable

{

public void handleNotification(Notification notification, Object handback)

{

String message = notification.getMessage();

String type = notification.getType();

Object userData = notification.getUserData();

System.out.println(type + ": " + message);

if (userData == null)

{

System.out.println("notification data is null");

}

else if (userData instanceof String)

{

System.out.println("notification data: " + (String) userData);

}

else if (userData instanceof Object[])

{

Object[] ud = (Object[]) userData;

for (Object data : ud)

{

System.out.println("notification data: " + data.toString());

}

}

else

{

System.out.println("notification data class: " + userData.getClass().getName());

}

}

}

Note that the JBoss Cache management implementation only listens to cache events after a client registers to receive MBean notifications. As soon as no clients are registered for notifications, the MBean will remove itself as a cache listener.

JBoss Cache MBeans are easily accessed when running cache instances in an application server that provides an MBean server interface such as JBoss JMX Console. Refer to your server documentation for instructions on how to access MBeans running in a server's MBean container.

In addition, though, JBoss Cache MBeans are also accessible when running in a non-server environment using

your JDK's jconsole tool. When running a standalone cache outside of an application server,

you can access the cache's MBeans as follows.

-

Set the system property

-Dcom.sun.management.jmxremotewhen starting the JVM where the cache will run. -

Once the JVM is running, start the

jconsoleutility, located in your JDK's/bindirectory. - When the utility loads, you will be able to select your running JVM and connect to it. The JBoss Cache MBeans will be available on the MBeans panel.

Note that the jconsole utility will automatically register as a listener for cache

notifications when connected to a JVM running JBoss Cache instances.

[1]

Note that if the

CacheJmxWrapper

is not registered in JMX, the

interceptor MBeans will not be registered either. The JBoss Cache 1.4 releases

included code that would try to "discover" an

MBeanServer

and

automatically register the interceptor MBeans with it. For JBoss Cache 2.x we decided

that this sort of "discovery" of the JMX environment was beyond the proper scope of

a caching library, so we removed this functionality.

Within a major version, releases of JBoss Cache are meant to be compatible and interoperable. Compatible in the sense that it should be possible to upgrade an application from one version to another by simply replacing jars. Interoperable in the sense that if two different versions of JBoss Cache are used in the same cluster, they should be able to exchange replication and state transfer messages. Note however that interoperability requires use of the same JGroups version in all nodes in the cluster. In most cases, the version of JGroups used by a version of JBoss Cache can be upgraded.

As such, JBoss Cache 2.x.x is not API or binary compatible with prior 1.x.x versions. On the other hand, JBoss Cache 2.1.x will be API and binary compatible with 2.0.x.

We have made best efforts, however, to keep JBoss Cache 3.x both binary and API compatible with 2.x. Still, it is recommended that client code is updated not to use deprecated methods, classes and configuration files.

A configuration parameter, Configuration.setReplicationVersion(), is available and is used

to control the wire format of inter-cache communications. They can be wound back from more

efficient and newer protocols to "compatible" versions when talking to older releases.

This mechanism allows us to improve JBoss Cache by using more efficient wire formats while

still providing a means to preserve interoperability.

A compatibility matrix is maintained on the JBoss Cache website, which contains information on different versions of JBoss Cache, JGroups and JBoss Application Server.

This section digs deeper into the JBoss Cache architecture, and is meant for developers wishing to use the more advanced cache features,extend or enhance the cache, write plugins, or are just looking for detailed knowledge of how things work under the hood.

Table of Contents

- 7. Architecture

- 8. Cache Modes and Clustering

- 9. Cache Loaders

- 10. Eviction

- 11. Transactions and Concurrency

A Cache consists of a collection of Node instances, organised in a tree

structure. Each Node contains a Map which holds the data

objects to be cached. It is important to note that the structure is a mathematical tree, and not a graph; each

Node has one and only one parent, and the root node is denoted by the constant fully qualified

name, Fqn.ROOT.

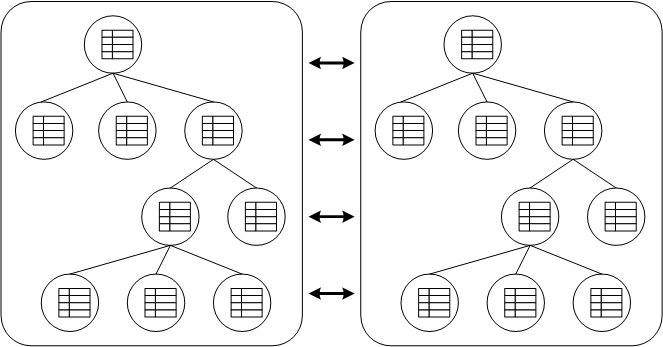

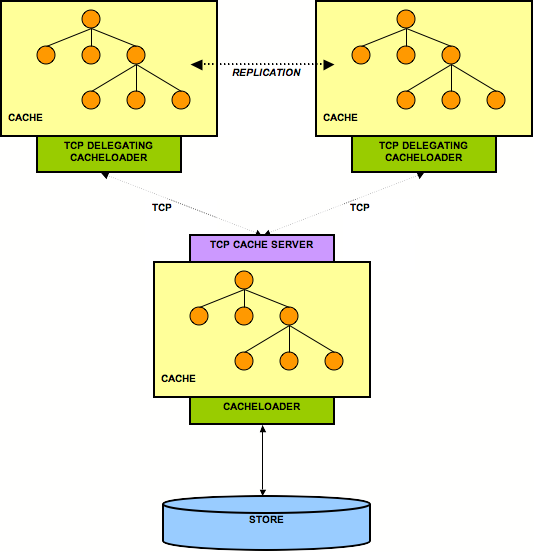

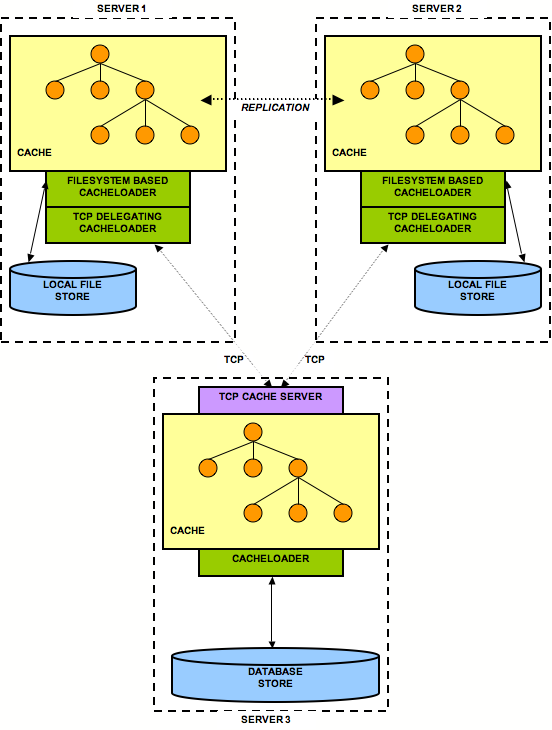

In the diagram above, each box represents a JVM. You see 2 caches in separate JVMs, replicating data to each

other.

Any modifications (see API chapter) in one cache instance will be replicated to the other cache. Naturally, you can have more than 2 caches in a cluster. Depending on the transactional settings, this replication will occur either after each modification or at the end of a transaction, at commit time. When a new cache is created, it can optionally acquire the contents from one of the existing caches on startup.

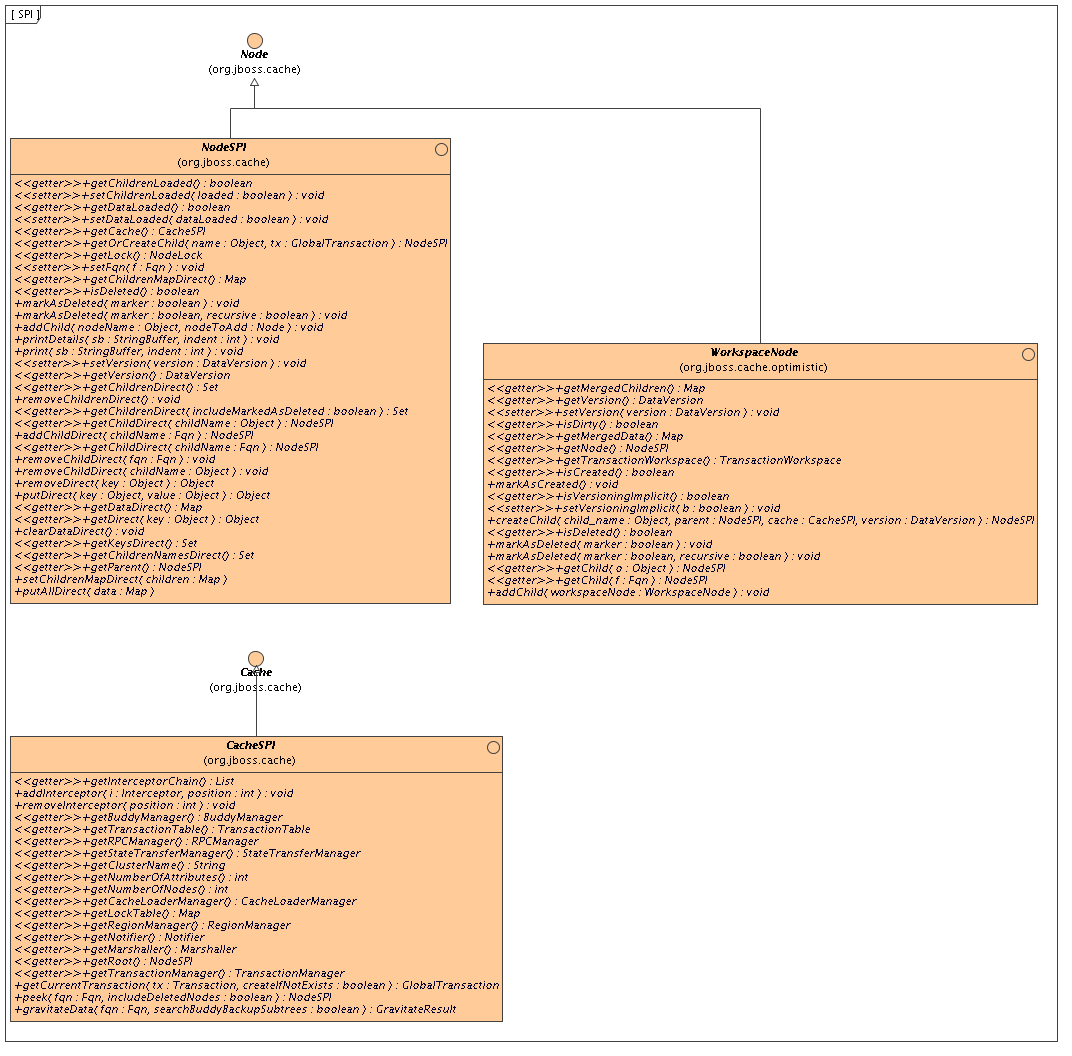

In addition to Cache and Node interfaces, JBoss Cache exposes more

powerful CacheSPI and NodeSPI interfaces, which offer more control over

the internals of JBoss Cache. These interfaces are not intended for general use, but are designed for people

who wish to extend and enhance JBoss Cache, or write custom Interceptor or

CacheLoader instances.

The CacheSPI interface cannot be created, but is injected into Interceptor

and CacheLoader implementations by the setCache(CacheSPI cache)

methods on these interfaces. CacheSPI extends Cache

so all the functionality of the basic API is also available.

Similarly, a NodeSPI interface cannot be created. Instead, one is obtained by performing

operations on CacheSPI, obtained as above. For example, Cache.getRoot() : Node

is overridden as CacheSPI.getRoot() : NodeSPI.

It is important to note that directly casting a Cache or Node

to its SPI counterpart is not recommended and is bad practice, since the inheritace of interfaces it is not a

contract that is guaranteed to be upheld moving forward. The exposed public APIs, on the other hand, is

guaranteed to be upheld.

Since the cache is essentially a collection of nodes, aspects such as clustering, persistence, eviction, etc. need to be applied to these nodes when operations are invoked on the cache as a whole or on individual nodes. To achieve this in a clean, modular and extensible manner, an interceptor chain is used. The chain is built up of a series of interceptors, each one adding an aspect or particular functionality. The chain is built when the cache is created, based on the configuration used.

It is important to note that the NodeSPI offers some methods (such as the xxxDirect()

method family) that operate on a node directly without passing through the interceptor stack. Plugin authors

should note that using such methods will affect the aspects of the cache that may need to be applied, such as

locking, replication, etc. To put it simply, don't use such methods unless you really

know what you're doing!

JBoss Cache essentially is a core data structure - an implementation of DataContainer - and

aspects and features are implemented using interceptors in front of this data structure. A

CommandInterceptor is an abstract class, interceptor implementations extend this.

CommandInterceptor implements the Visitor interface so it is able to

alter commands in a strongly typed manner as the command makes its way to the data structure. More on

visitors and commands in the next section.

Interceptor implementations are chained together in the InterceptorChain class, which

dispatches a command across the chain of interceptors. A special interceptor, the CallInterceptor,

always sits at the end of this chain to invoke the command being passed up the chain by calling the

command's process() method.

JBoss Cache ships with several interceptors, representing different behavioral aspects, some of which are:

TxInterceptor- looks for ongoing transactions and registers with transaction managers to participate in synchronization eventsReplicationInterceptor- replicates state across a cluster using the RpcManager classCacheLoaderInterceptor- loads data from a persistent store if the data requested is not available in memory

The interceptor chain configured for your cache instance can be obtained and inspected by calling

CacheSPI.getInterceptorChain(), which returns an ordered List

of interceptors in the order in which they would be encountered by a command.

Custom interceptors to add specific aspects or features can be written by extending

CommandInterceptor and overriding the relevant

visitXXX() methods based on the commands you are interested in intercepting. There

are other abstract interceptors you could extend instead, such as the PrePostProcessingCommandInterceptor

and the SkipCheckChainedInterceptor. Please see their respective javadocs for details

on the extra features provided.

The custom interceptor will need to be added to the interceptor chain by using the

Cache.addInterceptor() methods. See the javadocs on these methods for details.

Adding custom interceptors via XML is also supported, please see the XML configuration reference for details.

Internally, JBoss Cache uses a command/visitor pattern to execute API calls. Whenever a method is called

on the cache interface, the CacheInvocationDelegate, which implements the Cache

interface, creates an instance of VisitableCommand and dispatches this command up a chain of

interceptors. Interceptors, which implement the Visitor interface, are able to handle

VisitableCommands they are interested in, and add behavior to the command.

Each command contains all knowledge of the command being executed such as parameters used and processing

behavior, encapsulated in a process() method. For example, the RemoveNodeCommand

is created and passed up the interceptor chain when Cache.removeNode() is called, and

RemoveNodeCommand.process() has the necessary knowledge of how to remove a node from

the data structure.

In addition to being visitable, commands are also replicable. The JBoss Cache marshallers know how to efficiently marshall commands and invoke them on remote cache instances using an internal RPC mechanism based on JGroups.

InvocationContext

holds intermediate state for the duration of a single invocation, and is set up and

destroyed by the

InvocationContextInterceptor

which sits at the start of the interceptor chain.

InvocationContext

, as its name implies, holds contextual information associated with a single cache

method invocation. Contextual information includes associated

javax.transaction.Transaction

or

org.jboss.cache.transaction.GlobalTransaction

,

method invocation origin (

InvocationContext.isOriginLocal()

) as well as

Option

overrides

, and information around which nodes have been locked, etc.

The

InvocationContext

can be obtained by calling

Cache.getInvocationContext().

Some aspects and functionality is shared by more than a single interceptor. Some of these have been

encapsulated

into managers, for use by various interceptors, and are made available by the

CacheSPI

interface.

This class is responsible for calls made via the JGroups channel for all RPC calls to remote caches, and encapsulates the JGroups channel used.

This class manages buddy groups and invokes group organization remote calls to organize a cluster of caches into smaller sub-groups.

Early versions of JBoss Cache simply wrote cached data to the network by writing to an

ObjectOutputStream

during replication. Over various releases in the JBoss Cache 1.x.x series this approach was gradually

deprecated

in favor of a more mature marshalling framework. In the JBoss Cache 2.x.x series, this is the only officially

supported and recommended mechanism for writing objects to datastreams.

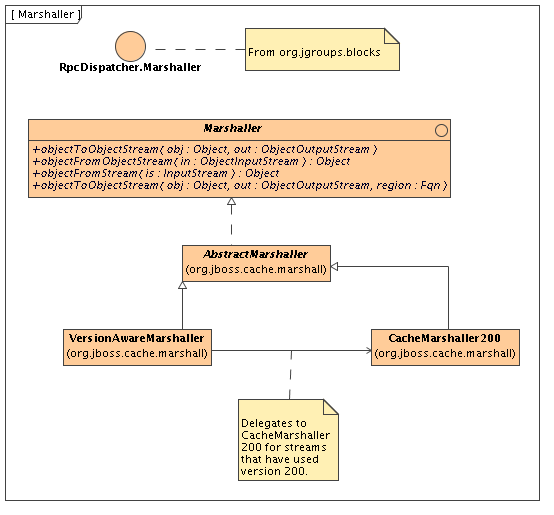

The

Marshaller

interface extends

RpcDispatcher.Marshaller

from JGroups.

This interface has two main implementations - a delegating

VersionAwareMarshaller

and a

concrete

CacheMarshaller300

.

The marshaller can be obtained by calling

CacheSPI.getMarshaller(), and defaults to the

VersionAwareMarshaller.

Users may also write their own marshallers by implementing the

Marshaller

interface or extending the AbstractMarshaller class, and adding it to their configuration

by using the Configuration.setMarshallerClass() setter.

As the name suggests, this marshaller adds a version

short

to the start of any stream when

writing, enabling similar

VersionAwareMarshaller

instances to read the version short and

know which specific marshaller implementation to delegate the call to.

For example,

CacheMarshaller200

is the marshaller for JBoss Cache 2.0.x.

JBoss Cache 3.0.x ships with

CacheMarshaller300

with an improved wire protocol. Using a

VersionAwareMarshaller

helps achieve wire protocol compatibility between minor

releases but still affords us the flexibility to tweak and improve the wire protocol between minor or micro

releases.

When used to cluster state of application servers, applications deployed in the application tend to put

instances

of objects specific to their application in the cache (or in an

HttpSession

object) which

would require replication. It is common for application servers to assign separate

ClassLoader

instances to each application deployed, but have JBoss Cache libraries referenced by the application server's

ClassLoader.

To enable us to successfully marshall and unmarshall objects from such class loaders, we use a concept called regions. A region is a portion of the cache which share a common class loader (a region also has other uses - see eviction policies).

A region is created by using the

Cache.getRegion(Fqn fqn, boolean createIfNotExists)

method,

and returns an implementation of the

Region

interface. Once a region is obtained, a

class loader for the region can be set or unset, and the region can be activated/deactivated. By default,

regions

are active unless the

InactiveOnStartup

configuration attribute is set to

true.

This chapter talks about aspects around clustering JBoss Cache.

JBoss Cache can be configured to be either local (standalone) or clustered. If in a cluster, the cache can be configured to replicate changes, or to invalidate changes. A detailed discussion on this follows.

Local caches don't join a cluster and don't communicate with other caches in a cluster. The dependency on the JGroups library is still there, although a JGroups channel is not started.

Replicated caches replicate all changes to some or all of the other cache instances in the cluster. Replication can either happen after each modification (no transactions or batches), or at the end of a transaction or batch.

Replication can be synchronous or asynchronous. Use of either one

of the options is application dependent. Synchronous replication blocks

the caller (e.g. on a

put()

) until the modifications

have been replicated successfully to all nodes in a cluster.

Asynchronous replication performs replication in the background (the

put()

returns immediately). JBoss Cache also offers a

replication queue, where modifications are replicated periodically (i.e.

interval-based), or when the queue size exceeds a number of elements, or

a combination thereof. A replication queue can therefore offer much higher performance as the actual

replication is performed by a background thread.

Asynchronous replication is faster (no caller blocking), because synchronous replication requires acknowledgments from all nodes in a cluster that they received and applied the modification successfully (round-trip time). However, when a synchronous replication returns successfully, the caller knows for sure that all modifications have been applied to all cache instances, whereas this is not be the case with asynchronous replication. With asynchronous replication, errors are simply written to a log. Even when using transactions, a transaction may succeed but replication may not succeed on all cache instances.

When using transactions, replication only occurs at the transaction boundary - i.e., when a transaction commits. This results in minimizing replication traffic since a single modification is broadcast rather than a series of individual modifications, and can be a lot more efficient than not using transactions. Another effect of this is that if a transaction were to roll back, nothing is broadcast across a cluster.

Depending on whether you are running your cluster in asynchronous or synchronous mode, JBoss Cache will use either a single phase or two phase commit protocol, respectively.

Used when your cache mode is REPL_ASYNC. All modifications are replicated in a single call, which instructs remote caches to apply the changes to their local in-memory state and commit locally. Remote errors/rollbacks are never fed back to the originator of the transaction since the communication is asynchronous.

Used when your cache mode is REPL_SYNC. Upon committing your transaction, JBoss Cache broadcasts a prepare call, which carries all modifications relevant to the transaction. Remote caches then acquire local locks on their in-memory state and apply the modifications. Once all remote caches respond to the prepare call, the originator of the transaction broadcasts a commit. This instructs all remote caches to commit their data. If any of the caches fail to respond to the prepare phase, the originator broadcasts a rollback.

Note that although the prepare phase is synchronous, the

commit and rollback phases are asynchronous. This is because

Sun's JTA

specification

does not specify how transactional resources

should deal with failures at this stage of a transaction; and other

resources participating in the transaction may have indeterminate

state anyway. As such, we do away with the overhead of synchronous

communication for this phase of the transaction. That said, they can

be forced to be synchronous using the

SyncCommitPhase

and

SyncRollbackPhase

configuration

attributes.

Buddy Replication allows you to suppress replicating your data to all instances in a cluster. Instead, each instance picks one or more 'buddies' in the cluster, and only replicates to these specific buddies. This greatly helps scalability as there is no longer a memory and network traffic impact every time another instance is added to a cluster.

One of the most common use cases of Buddy Replication is when a replicated cache is used by a servlet container to store HTTP session data. One of the pre-requisites to buddy replication working well and being a real benefit is the use of session affinity , more casually known as sticky sessions in HTTP session replication speak. What this means is that if certain data is frequently accessed, it is desirable that this is always accessed on one instance rather than in a round-robin fashion as this helps the cache cluster optimize how it chooses buddies, where it stores data, and minimizes replication traffic.

If this is not possible, Buddy Replication may prove to be more of an overhead than a benefit.

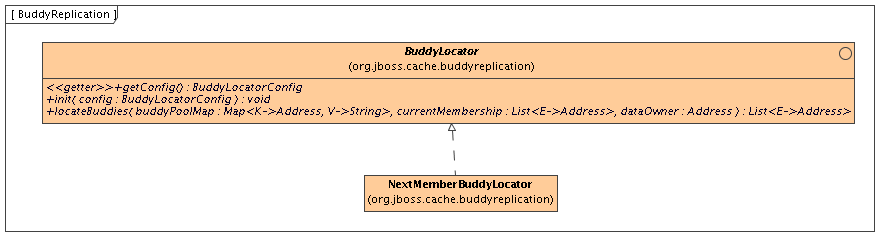

Buddy Replication uses an instance of a

BuddyLocator

which contains the logic used to

select buddies in a network. JBoss Cache currently ships with a

single implementation,

NextMemberBuddyLocator

,

which is used as a default if no implementation is provided. The

NextMemberBuddyLocator

selects the next member in

the cluster, as the name suggests, and guarantees an even spread of

buddies for each instance.

The

NextMemberBuddyLocator

takes in 2

parameters, both optional.

numBuddies- specifies how many buddies each instance should pick to back its data onto. This defaults to 1.ignoreColocatedBuddies- means that each instance will try to select a buddy on a different physical host. If not able to do so though, it will fall back to co-located instances. This defaults totrue.

Also known as

replication groups

, a buddy

pool is an optional construct where each instance in a cluster may

be configured with a buddy pool name. Think of this as an 'exclusive

club membership' where when selecting buddies,

BuddyLocator

s that support buddy pools would try

and select buddies sharing the same buddy pool name. This allows

system administrators a degree of flexibility and control over how

buddies are selected. For example, a sysadmin may put two instances

on two separate physical servers that may be on two separate

physical racks in the same buddy pool. So rather than picking an

instance on a different host on the same rack,

BuddyLocator

s would rather pick the instance in

the same buddy pool, on a separate rack which may add a degree of

redundancy.

In the unfortunate event of an instance crashing, it is assumed that the client connecting to the cache (directly or indirectly, via some other service such as HTTP session replication) is able to redirect the request to any other random cache instance in the cluster. This is where a concept of Data Gravitation comes in.

Data Gravitation is a concept where if a request is made on a cache in the cluster and the cache does not contain this information, it asks other instances in the cluster for the data. In other words, data is lazily transferred, migrating only when other nodes ask for it. This strategy prevents a network storm effect where lots of data is pushed around healthy nodes because only one (or a few) of them die.

If the data is not found in the primary section of some node, it would (optionally) ask other instances to check in the backup data they store for other caches. This means that even if a cache containing your session dies, other instances will still be able to access this data by asking the cluster to search through their backups for this data.

Once located, this data is transferred to the instance which requested it and is added to this instance's data tree. The data is then (optionally) removed from all other instances (and backups) so that if session affinity is used, the affinity should now be to this new cache instance which has just taken ownership of this data.

Data Gravitation is implemented as an interceptor. The following (all optional) configuration properties pertain to data gravitation.

dataGravitationRemoveOnFind- forces all remote caches that own the data or hold backups for the data to remove that data, thereby making the requesting cache the new data owner. This removal, of course, only happens after the new owner finishes replicating data to its buddy. If set tofalsean evict is broadcast instead of a remove, so any state persisted in cache loaders will remain. This is useful if you have a shared cache loader configured. Defaults totrue.dataGravitationSearchBackupTrees- Asks remote instances to search through their backups as well as main data trees. Defaults totrue. The resulting effect is that if this istruethen backup nodes can respond to data gravitation requests in addition to data owners.autoDataGravitation- Whether data gravitation occurs for every cache miss. By default this is set tofalseto prevent unnecessary network calls. Most use cases will know when it may need to gravitate data and will pass in anOptionto enable data gravitation on a per-invocation basis. IfautoDataGravitationistruethisOptionis unnecessary.

If a cache is configured for invalidation rather than replication, every time data is changed in a cache other caches in the cluster receive a message informing them that their data is now stale and should be evicted from memory. Invalidation, when used with a shared cache loader (see chapter on cache loaders) would cause remote caches to refer to the shared cache loader to retrieve modified data. The benefit of this is twofold: network traffic is minimized as invalidation messages are very small compared to replicating updated data, and also that other caches in the cluster look up modified data in a lazy manner, only when needed.

Invalidation messages are sent after each modification (no transactions or batches), or at the end of a transaction or batch, upon successful commit. This is usually more efficient as invalidation messages can be optimized for the transaction as a whole rather than on a per-modification basis.

Invalidation too can be synchronous or asynchronous, and just as in the case of replication, synchronous invalidation blocks until all caches in the cluster receive invalidation messages and have evicted stale data while asynchronous invalidation works in a 'fire-and-forget' mode, where invalidation messages are broadcast but doesn't block and wait for responses.

State Transfer refers to the process by which a JBoss Cache instance prepares itself to begin providing a service by acquiring the current state from another cache instance and integrating that state into its own state.

There are three divisions of state transfer types depending on a point of view related to state transfer. First, in the context of particular state transfer implementation, the underlying plumbing, there are two starkly different state transfer types: byte array and streaming based state transfer. Second, state transfer can be full or partial state transfer depending on a subtree being transferred. Entire cache tree transfer represents full transfer while transfer of a particular subtree represents partial state transfer. And finally state transfer can be "in-memory" and "persistent" transfer depending on a particular use of cache.

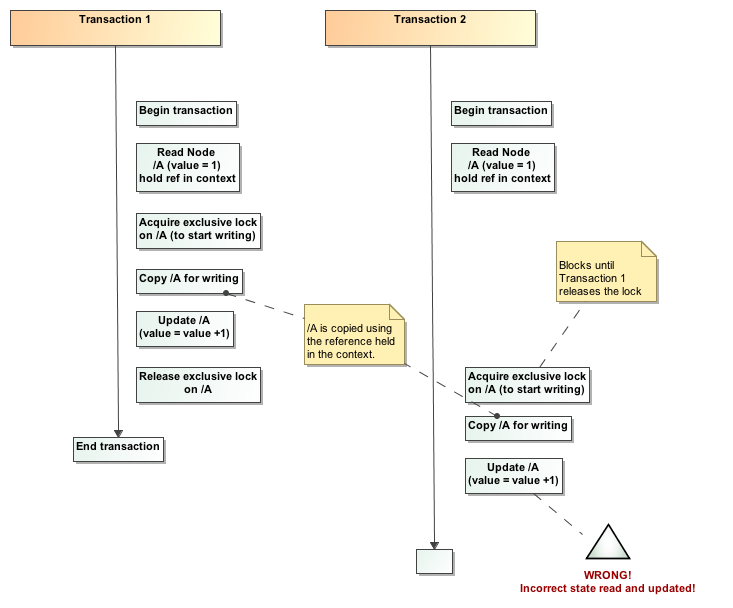

Byte array based transfer was a default and only transfer methodology for cache in all previous releases up to 2.0. Byte array based transfer loads entire state transferred into a byte array and sends it to a state receiving member. Major limitation of this approach is that the state transfer that is very large (>1GB) would likely result in OutOfMemoryException. Streaming state transfer provides an InputStream to a state reader and an OutputStream to a state writer. OutputStream and InputStream abstractions enable state transfer in byte chunks thus resulting in smaller memory requirements. For example, if application state is represented as a tree whose aggregate size is 1GB, rather than having to provide a 1GB byte array streaming state transfer transfers the state in chunks of N bytes where N is user configurable.