Preface

Working with both Object-Oriented software and Relational Databases can be cumbersome and time-consuming. Development costs are significantly higher due to a paradigm mismatch between how data is represented in objects versus relational databases. Hibernate is an Object/Relational Mapping solution for Java environments. The term Object/Relational Mapping refers to the technique of mapping data from an object model representation to a relational data model representation (and vice versa).

Hibernate not only takes care of the mapping from Java classes to database tables (and from Java data types to SQL data types), but also provides data query and retrieval facilities. It can significantly reduce development time otherwise spent with manual data handling in SQL and JDBC. Hibernate’s design goal is to relieve the developer from 95% of common data persistence-related programming tasks by eliminating the need for manual, hand-crafted data processing using SQL and JDBC. However, unlike many other persistence solutions, Hibernate does not hide the power of SQL from you and guarantees that your investment in relational technology and knowledge is as valid as always.

Hibernate may not be the best solution for data-centric applications that only use stored procedures to implement the business logic in the database; it is most useful with object-oriented domain models and business logic in the Java-based middle tier. However, Hibernate can certainly help you to remove or encapsulate vendor-specific SQL code and will help with the common task of result set translation from a tabular representation to a graph of objects.

Getting Started

While a strong background in SQL is not required to use Hibernate, a basic understanding of its concepts is useful, especially the principles of data modeling. Understanding the basics of transactions and design patterns such as Unit of Work is important as well.

New users may want to first look at the tutorial-style Quick Start guide. This User Guide is really more of a reference guide. For a more high-level discussion of the most used features of Hibernate, see the Introduction to Hibernate guide. There is also a series of topical guides providing deep dives into various topics such as logging, compatibility and support, etc.

Get Involved

- Use Hibernate and report any bugs or issues you find. See Issue Tracker for details.
- Try your hand at fixing some bugs or implementing enhancements. Again, see Issue Tracker.
- Engage with the community using the methods listed in the Community section.
- Help improve this documentation. Contact us on the developer mailing list or Zulip if you have interest.
- Spread the word. Let the rest of your organization know about the benefits of Hibernate.

1. Compatibility

1.1. Dependencies

Hibernate 6.5.3.Final requires the following dependencies (among others):

| Dependency | Version |
|---|---|
| Java Runtime | 11, 17 or 21 |
| Jakarta Persistence | 3.1.0 |
| JDBC (bundled with the Java Runtime) | 4.2 |

Find more information for all versions of Hibernate on our compatibility matrix. The compatibility policy may also be of interest.

If you get Hibernate from Maven Central, it is recommended to import Hibernate Platform as part of your dependency management to keep all its artifact versions aligned.

- Gradle

dependencies {

implementation platform "org.hibernate.orm:hibernate-platform:6.5.3.Final"

// use the versions from the platform

implementation "org.hibernate.orm:hibernate-core"

implementation "jakarta.transaction:jakarta.transaction-api"

}

- Maven

<dependencyManagement>

<dependencies>

<dependency>

<groupId>org.hibernate.orm</groupId>

<artifactId>hibernate-platform</artifactId>

<version>6.5.3.Final</version>

<type>pom</type>

<scope>import</scope>

</dependency>

</dependencies>

</dependencyManagement>

<!-- use the versions from the platform -->

<dependencies>

<dependency>

<groupId>org.hibernate.orm</groupId>

<artifactId>hibernate-core</artifactId>

</dependency>

<dependency>

<groupId>jakarta.transaction</groupId>

<artifactId>jakarta.transaction-api</artifactId>

</dependency>

</dependencies>

1.2. Database

Hibernate 6.5.3.Final is compatible with the following database versions, provided you use the corresponding dialects:

| Dialect | Minimum Database Version |
|---|---|
| CockroachDialect | 22.2 |
| DB2Dialect | 10.5 |
| DB2iDialect | 7.1 |
| DB2zDialect | 12.1 |
| DerbyDialect | 10.15.2 |
| GenericDialect | 0.0 |
| H2Dialect | 2.1.214 |
| HANADialect | 1.0.120 |
| HSQLDialect | 2.6.1 |
| MariaDBDialect | 10.4 |
| MySQLDialect | 8.0 |
| OracleDialect | 19.0 |
| PostgreSQLDialect | 12.0 |
| PostgresPlusDialect | 12.0 |
| SQLServerDialect | 11.0 |
| SpannerDialect | 0.0 |
| SybaseASEDialect | 16.0 |
| SybaseDialect | 16.0 |
| TiDBDialect | 5.4 |

2. Architecture

2.1. Overview

Hibernate, as an ORM solution, effectively "sits between" the Java application data access layer and the Relational Database, as can be seen in the diagram above. The Java application makes use of the Hibernate APIs to load, store, query, etc. its domain data. Here we will introduce the essential Hibernate APIs. This will be a brief introduction; we will discuss these contracts in detail later.

As a Jakarta Persistence provider, Hibernate implements the Java Persistence API specifications. The association between Jakarta Persistence interfaces and Hibernate-specific implementations can be visualized in the following diagram:

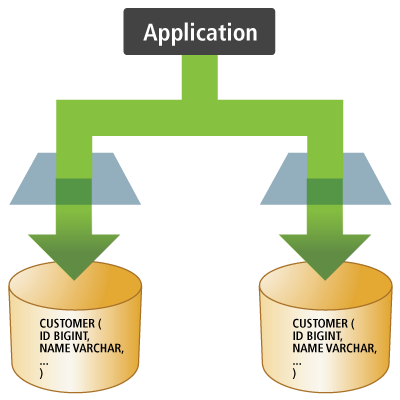

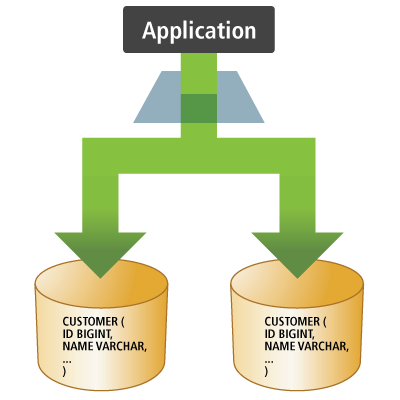

- SessionFactory (org.hibernate.SessionFactory)

  A thread-safe (and immutable) representation of the mapping of the application domain model to a database. Acts as a factory for org.hibernate.Session instances. The EntityManagerFactory is the Jakarta Persistence equivalent of a SessionFactory and basically, those two converge into the same SessionFactory implementation.

  A SessionFactory is very expensive to create, so, for any given database, the application should have only one associated SessionFactory. The SessionFactory maintains services that Hibernate uses across all Session(s) such as second level caches, connection pools, transaction system integrations, etc.

- Session (org.hibernate.Session)

  A single-threaded, short-lived object conceptually modeling a "Unit of Work" (PoEAA). In Jakarta Persistence nomenclature, the Session is represented by an EntityManager.

  Behind the scenes, the Hibernate Session wraps a JDBC java.sql.Connection and acts as a factory for org.hibernate.Transaction instances. It maintains a generally "repeatable read" persistence context (first level cache) of the application domain model.

- Transaction (org.hibernate.Transaction)

  A single-threaded, short-lived object used by the application to demarcate individual physical transaction boundaries. EntityTransaction is the Jakarta Persistence equivalent and both act as an abstraction API to isolate the application from the underlying transaction system in use (JDBC or JTA).

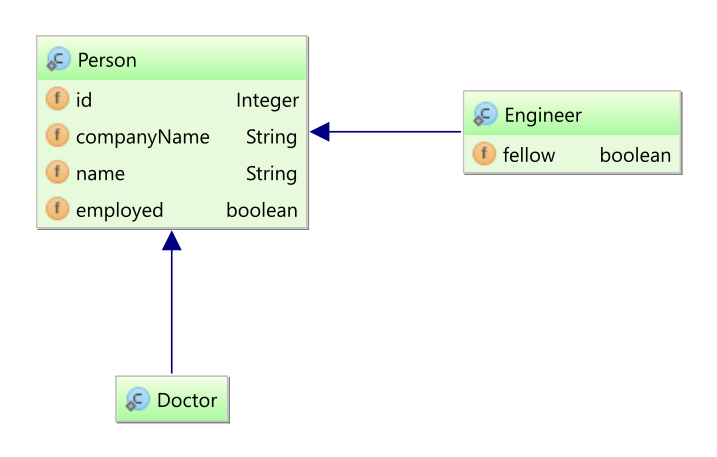

3. Domain Model

The term domain model comes from the realm of data modeling. It is the model that ultimately describes the problem domain you are working in. Sometimes you will also hear the term persistent classes.

Ultimately the application domain model is the central character in an ORM.

It is made up of the classes you wish to map. Hibernate works best if these classes follow the Plain Old Java Object (POJO) / JavaBean programming model.

However, none of these rules are hard requirements.

Indeed, Hibernate assumes very little about the nature of your persistent objects. You can express a domain model in other ways (using trees of java.util.Map instances, for example).

Historically applications using Hibernate would have used its proprietary XML mapping file format for this purpose. With the coming of Jakarta Persistence, most of this information is now defined in a way that is portable across ORM/Jakarta Persistence providers using annotations (and/or standardized XML format). This chapter will focus on Jakarta Persistence mapping where possible. For Hibernate mapping features not supported by Jakarta Persistence we will prefer Hibernate extension annotations.

This chapter mostly uses "implicit naming" for table names, column names, etc. For details on adjusting these names see Naming strategies.

3.1. Mapping types

Hibernate understands both the Java and JDBC representations of application data.

The ability to read/write this data from/to the database is the function of a Hibernate type.

A type, in this usage, is an implementation of the org.hibernate.type.Type interface.

This Hibernate type also describes various behavioral aspects of the Java type such as how to check for equality, how to clone values, etc.

Usage of the word type

The Hibernate type is neither a Java type nor a SQL data type. It provides information about mapping a Java type to an SQL type as well as how to persist and fetch a given Java type to and from a relational database. When you encounter the term type in discussions of Hibernate, it may refer to the Java type, the JDBC type, or the Hibernate type, depending on the context.

To help understand the type categorizations, let’s look at a simple table and domain model that we wish to map.

create table Contact (

id integer not null,

first varchar(255),

last varchar(255),

middle varchar(255),

notes varchar(255),

starred boolean not null,

website varchar(255),

primary key (id)

)

@Entity(name = "Contact")

public static class Contact {

@Id

private Integer id;

private Name name;

private String notes;

private URL website;

private boolean starred;

//Getters and setters are omitted for brevity

}

@Embeddable

public class Name {

private String firstName;

private String middleName;

private String lastName;

// getters and setters omitted

}

In the broadest sense, Hibernate categorizes types into two groups:

3.1.1. Value types

A value type is a piece of data that does not define its own lifecycle. It is, in effect, owned by an entity, which defines its lifecycle.

Looked at another way, all the state of an entity is made up entirely of value types.

These state fields or JavaBean properties are termed persistent attributes.

The persistent attributes of the Contact class are value types.

Value types are further classified into three sub-categories:

- Basic types: in mapping the Contact table, all attributes except for name would be basic types. Basic types are discussed in detail in Basic types.
- Embeddable types: the name attribute is an example of an embeddable type, which is discussed in detail in Embeddable types.
- Collection types: although not featured in the aforementioned example, collection types are also a distinct category among value types. Collection types are further discussed in Collections.

3.1.2. Entity types

Entities, by nature of their unique identifier, exist independently of other objects whereas values do not.

Entities are domain model classes which correlate to rows in a database table, using a unique identifier.

Because of the requirement for a unique identifier, entities exist independently and define their own lifecycle.

The Contact class itself would be an example of an entity.

Mapping entities is discussed in detail in Entity types.

3.2. Basic values

A basic type is a mapping between a Java type and a single database column.

Hibernate can map many standard Java types (Integer, String, etc.) as basic types. The mappings for many of them come from tables B-3 and B-4 in the JDBC specification[jdbc].

Others (e.g. URL as VARCHAR) simply make sense.

Additionally, Hibernate provides multiple, flexible ways to indicate how the Java type should be mapped to the database.

|

The Jakarta Persistence specification strictly limits the Java types that can be marked as basic to the following:

If provider portability is a concern, you should stick to just these basic types. Java Persistence 2.1 introduced the |

3.2.1. @Basic

Strictly speaking, a basic type is denoted by the jakarta.persistence.Basic annotation.

Generally, the @Basic annotation can be ignored as it is assumed by default. Both of the following examples are ultimately the same.

@Basic explicit

@Entity(name = "Product")

public class Product {

@Id

@Basic

private Integer id;

@Basic

private String sku;

@Basic

private String name;

@Basic

private String description;

}

@Basic implied

@Entity(name = "Product")

public class Product {

@Id

private Integer id;

private String sku;

private String name;

private String description;

}

The @Basic annotation defines 2 attributes.

- optional - boolean (defaults to true)

  Defines whether this attribute allows nulls. Jakarta Persistence defines this as "a hint", which means the provider is free to ignore it. Jakarta Persistence also says that it will be ignored if the type is primitive. As long as the type is not primitive, Hibernate will honor this value. Works in conjunction with @Column#nullable - see @Column.

- fetch - FetchType (defaults to EAGER)

  Defines whether this attribute should be fetched eagerly or lazily. EAGER indicates that the value will be fetched as part of loading the owner. LAZY values are fetched only when the value is accessed. Jakarta Persistence requires providers to support EAGER, while support for LAZY is optional, meaning that a provider is free to not support it. Hibernate supports lazy loading of basic values as long as you are using its bytecode enhancement support.

3.2.2. @Column

Jakarta Persistence defines rules for implicitly determining the name of tables and columns. For a detailed discussion of implicit naming see Naming strategies.

For basic type attributes, the implicit naming rule is that the column name is the same as the attribute name. If that implicit naming rule does not meet your requirements, you can explicitly tell Hibernate (and other providers) the column name to use.

@Entity(name = "Product")

public class Product {

@Id

private Integer id;

private String sku;

private String name;

@Column(name = "NOTES")

private String description;

}

Here we use @Column to explicitly map the description attribute to the NOTES column, as opposed to the implicit column name description. See Naming strategies for additional details.

The @Column annotation defines other mapping information as well. See its Javadocs for details.

3.2.3. @Formula

@Formula allows mapping any database computed value as a virtual read-only column.


@Formula mapping usage

@Entity(name = "Account")

public static class Account {

@Id

private Long id;

private Double credit;

private Double rate;

@Formula(value = "credit * rate")

private Double interest;

//Getters and setters omitted for brevity

}

When loading the Account entity, Hibernate is going to calculate the interest property using the configured @Formula:

@Formula mapping

doInJPA(this::entityManagerFactory, entityManager -> {

Account account = new Account();

account.setId(1L);

account.setCredit(5000d);

account.setRate(1.25 / 100);

entityManager.persist(account);

});

doInJPA(this::entityManagerFactory, entityManager -> {

Account account = entityManager.find(Account.class, 1L);

assertEquals(Double.valueOf(62.5d), account.getInterest());

});

INSERT INTO Account (credit, rate, id)

VALUES (5000.0, 0.0125, 1)

SELECT

a.id as id1_0_0_,

a.credit as credit2_0_0_,

a.rate as rate3_0_0_,

a.credit * a.rate as formula0_0_

FROM

Account a

WHERE

a.id = 1

|

The SQL fragment defined by the |

3.2.4. Mapping basic values

To deal with values of basic type, Hibernate needs to understand a few things about the mapping:

- The capabilities of the Java type. For example:
  - How to compare values
  - How to calculate a hash-code
  - How to coerce values of this type to another type
- The JDBC type it should use
  - How to bind values to JDBC statements
  - How to extract from JDBC results
- Any conversion it should perform on the value to/from the database
- The mutability of the value - whether the internal state can change like java.util.Date or is immutable like java.lang.String
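Why mutability matters can be illustrated in plain Java. The sketch below is not Hibernate code: `MutabilityDemo`, `shareReference` and `copy` are hypothetical names that stand in for whatever snapshot a persistence layer might keep in order to notice later changes. An immutable `String` can be shared safely, while a mutable `java.util.Date` has to be copied, otherwise the snapshot changes together with the live value.

```java
import java.util.Date;

// Hypothetical illustration, not part of the Hibernate API.
class MutabilityDemo {

    // Keeps only a reference: sufficient for immutable values such as String.
    static <T> T shareReference(T value) {
        return value;
    }

    // Makes a defensive copy: needed for mutable values such as java.util.Date.
    static Date copy(Date value) {
        return value == null ? null : new Date(value.getTime());
    }

    public static void main(String[] args) {
        Date live = new Date(0L);
        Date sharedSnapshot = shareReference(live);
        Date copiedSnapshot = copy(live);

        live.setTime(1_000L); // in-place change of the internal state

        // The shared snapshot changed along with the live value: prints true.
        System.out.println(live.equals(sharedSnapshot));
        // The copied snapshot still holds the old state: prints false.
        System.out.println(live.equals(copiedSnapshot));
    }
}
```

Comparing the live value against a copied snapshot is the only one of the two checks that can reveal the change, which is why a mapping needs to know whether a type is mutable.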

This section covers how Hibernate determines these pieces and how to influence that determination process.

The following sections focus on approaches introduced in version 6 to influence how Hibernate will map basic value to the database. This includes removal of the following deprecated legacy annotations:

See the 6.0 migration guide for discussions about migrating uses of these annotations. The new annotations added as part of 6.0 support composing mappings in annotations through "meta-annotations".

Looking at this example, how does Hibernate know what mapping to use for these attributes? The annotations do not really provide much information.

This is an illustration of Hibernate’s implicit basic-type resolution, which is a series of checks to determine the appropriate mapping to use. Describing the complete process for implicit resolution is beyond the scope of this documentation[2].

This is primarily driven by the Java type defined for the basic type, which can generally be determined through reflection. Is the Java type an enum? Is it temporal? These answers can indicate certain mappings be used.

The fallback is to map the value to the "recommended" JDBC type.

Worst case, if the Java type is Serializable Hibernate will try to handle it via binary serialization.

For cases where the Java type is not a standard type or if some specialized handling is desired, Hibernate provides 2 main approaches to influence this mapping resolution:

- A compositional approach using a combination of one-or-more annotations to describe specific aspects of the mapping. This approach is covered in Compositional basic mapping.
- The UserType contract, which is covered in Custom type mapping.

These 2 approaches should be considered mutually exclusive. A custom UserType will always take precedence over compositional annotations.

The next few sections look at common, standard Java types and discuss various ways to map them.

See Case Study : BitSet for examples of mapping BitSet as a basic type using all of these approaches.

3.2.5. Enums

Hibernate supports the mapping of Java enums as basic value types in a number of different ways.

@Enumerated

The original Jakarta Persistence-compliant way to map enums was via the @Enumerated or @MapKeyEnumerated annotations, working on the principle that the enum values are stored according to one of 2 strategies indicated by jakarta.persistence.EnumType:

- ORDINAL - stored according to the enum value’s ordinal position within the enum class, as indicated by java.lang.Enum#ordinal
- STRING - stored according to the enum value’s name, as indicated by java.lang.Enum#name

Assuming the following enumeration:

PhoneType enumeration

public enum PhoneType {

LAND_LINE,

MOBILE;

}

In the ORDINAL example, the phone_type column is defined as a (nullable) INTEGER type and would hold:

- NULL - for null values
- 0 - for the LAND_LINE enum
- 1 - for the MOBILE enum

@Enumerated(ORDINAL) example

@Entity(name = "Phone")

public static class Phone {

@Id

private Long id;

@Column(name = "phone_number")

private String number;

@Enumerated(EnumType.ORDINAL)

@Column(name = "phone_type")

private PhoneType type;

//Getters and setters are omitted for brevity

}

When persisting this entity, Hibernate generates the following SQL statement:

@Enumerated(ORDINAL) mapping

Phone phone = new Phone();

phone.setId(1L);

phone.setNumber("123-456-78990");

phone.setType(PhoneType.MOBILE);

entityManager.persist(phone);

INSERT INTO Phone (phone_number, phone_type, id)

VALUES ('123-456-78990', 1, 1)

In the STRING example, the phone_type column is defined as a (nullable) VARCHAR type and would hold:

- NULL - for null values
- LAND_LINE - for the LAND_LINE enum
- MOBILE - for the MOBILE enum
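The two strategies correspond directly to `java.lang.Enum#ordinal` and `java.lang.Enum#name`. The plain-Java sketch below involves no Hibernate at all; `EnumStorageDemo` and `PhoneTypeV2` are names invented for illustration. It shows the value each strategy would store for `PhoneType`, and why ordinal data is sensitive to reordering the constants.

```java
// Plain-Java illustration; every name except PhoneType is hypothetical.
class EnumStorageDemo {

    enum PhoneType { LAND_LINE, MOBILE }

    // The same enum after a hypothetical refactoring that adds a constant in front.
    enum PhoneTypeV2 { FAX, LAND_LINE, MOBILE }

    // Value that would be stored under EnumType.ORDINAL.
    static int ordinalValue(Enum<?> value) {
        return value.ordinal();
    }

    // Value that would be stored under EnumType.STRING.
    static String stringValue(Enum<?> value) {
        return value.name();
    }

    public static void main(String[] args) {
        System.out.println(ordinalValue(PhoneType.MOBILE)); // 1
        System.out.println(stringValue(PhoneType.MOBILE));  // MOBILE

        // A row written as ordinal 1 (MOBILE) resolves to LAND_LINE after the refactoring,
        System.out.println(PhoneTypeV2.values()[1]);        // LAND_LINE
        // whereas the stored name still resolves to the intended constant.
        System.out.println(PhoneTypeV2.valueOf("MOBILE"));  // MOBILE
    }
}
```

The trade-off runs the other way for renames: stored names break when a constant is renamed, while stored ordinals do not.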

@Enumerated(STRING) example

@Entity(name = "Phone")

public static class Phone {

@Id

private Long id;

@Column(name = "phone_number")

private String number;

@Enumerated(EnumType.STRING)

@Column(name = "phone_type")

private PhoneType type;

//Getters and setters are omitted for brevity

}

Persisting the same entity as in the @Enumerated(ORDINAL) example, Hibernate generates the following SQL statement:

@Enumerated(STRING) mapping

INSERT INTO Phone (phone_number, phone_type, id)

VALUES ('123-456-78990', 'MOBILE', 1)

Using AttributeConverter

Let’s consider the following Gender enum which stores its values using the 'M' and 'F' codes.

public enum Gender {

MALE('M'),

FEMALE('F');

private final char code;

Gender(char code) {

this.code = code;

}

public static Gender fromCode(char code) {

if (code == 'M' || code == 'm') {

return MALE;

}

if (code == 'F' || code == 'f') {

return FEMALE;

}

throw new UnsupportedOperationException(

"The code " + code + " is not supported!"

);

}

public char getCode() {

return code;

}

}

You can map enums in a Jakarta Persistence compliant way using a Jakarta Persistence AttributeConverter.

AttributeConverter example

@Entity(name = "Person")

public static class Person {

@Id

private Long id;

private String name;

@Convert(converter = GenderConverter.class)

public Gender gender;

//Getters and setters are omitted for brevity

}

@Converter

public static class GenderConverter

implements AttributeConverter<Gender, Character> {

public Character convertToDatabaseColumn(Gender value) {

if (value == null) {

return null;

}

return value.getCode();

}

public Gender convertToEntityAttribute(Character value) {

if (value == null) {

return null;

}

return Gender.fromCode(value);

}

}

Here, the gender column is defined as a CHAR type and would hold:

- NULL - for null values
- 'M' - for the MALE enum
- 'F' - for the FEMALE enum
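The conversion in both directions can be tried without a persistence provider. The sketch below is self-contained plain Java under stated assumptions: `SimpleConverter` is a hypothetical stand-in that declares the same two methods as `jakarta.persistence.AttributeConverter`, and `Gender` is repeated in condensed form so that the block compiles on its own.

```java
// Self-contained sketch: SimpleConverter is a hypothetical stand-in for
// jakarta.persistence.AttributeConverter so the example runs without dependencies.
class GenderRoundTripDemo {

    interface SimpleConverter<X, Y> {
        Y convertToDatabaseColumn(X attribute);
        X convertToEntityAttribute(Y dbData);
    }

    enum Gender {
        MALE('M'), FEMALE('F');

        private final char code;

        Gender(char code) { this.code = code; }

        char getCode() { return code; }

        // Accepts upper- and lower-case codes, rejects everything else.
        static Gender fromCode(char code) {
            switch (Character.toUpperCase(code)) {
                case 'M': return MALE;
                case 'F': return FEMALE;
                default:
                    throw new UnsupportedOperationException("The code " + code + " is not supported!");
            }
        }
    }

    static final SimpleConverter<Gender, Character> CONVERTER = new SimpleConverter<>() {
        public Character convertToDatabaseColumn(Gender value) {
            return value == null ? null : value.getCode();
        }

        public Gender convertToEntityAttribute(Character value) {
            return value == null ? null : Gender.fromCode(value);
        }
    };

    public static void main(String[] args) {
        Character stored = CONVERTER.convertToDatabaseColumn(Gender.FEMALE);
        System.out.println(stored);                                     // F
        System.out.println(CONVERTER.convertToEntityAttribute(stored)); // FEMALE
        System.out.println(CONVERTER.convertToDatabaseColumn(null));    // null
    }
}
```

Both methods pass `null` through unchanged, which is what lets the column hold NULL for a missing value.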

For additional details on using AttributeConverters, see the AttributeConverters section.

|

Jakarta Persistence explicitly disallows the use of an So, when using the |

Custom type

You can also map enums using a Hibernate custom type mapping.

Let’s again revisit the Gender enum example, this time using a custom Type to store the more standardized 'M' and 'F' codes.

@Entity(name = "Person")

public static class Person {

@Id

private Long id;

private String name;

@Type(GenderType.class)

@Column(length = 6)

public Gender gender;

//Getters and setters are omitted for brevity

}

public class GenderType extends UserTypeSupport<Gender> {

public GenderType() {

super(Gender.class, Types.CHAR);

}

}

public class GenderJavaType extends AbstractClassJavaType<Gender> {

public static final GenderJavaType INSTANCE =

new GenderJavaType();

protected GenderJavaType() {

super(Gender.class);

}

public String toString(Gender value) {

return value == null ? null : value.name();

}

public Gender fromString(CharSequence string) {

return string == null ? null : Gender.valueOf(string.toString());

}

public <X> X unwrap(Gender value, Class<X> type, WrapperOptions options) {

return CharacterJavaType.INSTANCE.unwrap(

value == null ? null : value.getCode(),

type,

options

);

}

public <X> Gender wrap(X value, WrapperOptions options) {

return Gender.fromCode(

CharacterJavaType.INSTANCE.wrap( value, options)

);

}

}

Again, the gender column is defined as a CHAR type and would hold:

- NULL - for null values
- 'M' - for the MALE enum
- 'F' - for the FEMALE enum

For additional details on using custom types, see Custom type mapping section.

3.2.6. Boolean

By default, Boolean attributes map to BOOLEAN columns, at least when the database has a

dedicated BOOLEAN type. On databases which don’t, Hibernate uses whatever else is available:

BIT, TINYINT, or SMALLINT.

// this will be mapped to BIT or BOOLEAN on the database

@Basic

boolean implicit;

However, it is quite common to find boolean values encoded as a character or as an integer. Such cases are exactly the intention of AttributeConverter. For convenience, Hibernate provides 3 built-in converters for the common boolean mapping cases:

- YesNoConverter encodes a boolean value as 'Y' or 'N',
- TrueFalseConverter encodes a boolean value as 'T' or 'F', and
- NumericBooleanConverter encodes the value as an integer, 1 for true, and 0 for false.
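The three encodings can be pictured with a few lines of plain Java. This is only an illustration of the value mapping listed above, not the source of Hibernate's converters; `BooleanEncodingDemo` and its methods are hypothetical names.

```java
// Illustration only: hypothetical helper, not the source of Hibernate's converters.
class BooleanEncodingDemo {

    // 'Y' / 'N' encoding, as described for YesNoConverter.
    static char toYesNo(boolean value) {
        return value ? 'Y' : 'N';
    }

    // 'T' / 'F' encoding, as described for TrueFalseConverter.
    static char toTrueFalse(boolean value) {
        return value ? 'T' : 'F';
    }

    // 1 / 0 encoding, as described for NumericBooleanConverter.
    static int toNumeric(boolean value) {
        return value ? 1 : 0;
    }

    // Reverse direction for the Y/N encoding. Rejecting other characters is a
    // choice made for this sketch, not a statement about Hibernate's behavior.
    static boolean fromYesNo(char code) {
        switch (code) {
            case 'Y': return true;
            case 'N': return false;
            default: throw new IllegalArgumentException("Unexpected code: " + code);
        }
    }

    public static void main(String[] args) {
        System.out.println(toYesNo(true));      // Y
        System.out.println(toTrueFalse(false)); // F
        System.out.println(toNumeric(true));    // 1
        System.out.println(fromYesNo('N'));     // false
    }
}
```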

AttributeConverter

// this will get mapped to CHAR or NCHAR with a conversion

@Basic

@Convert(converter = org.hibernate.type.YesNoConverter.class)

boolean convertedYesNo;

// this will get mapped to CHAR or NCHAR with a conversion

@Basic

@Convert(converter = org.hibernate.type.TrueFalseConverter.class)

boolean convertedTrueFalse;

// this will get mapped to TINYINT with a conversion

@Basic

@Convert(converter = org.hibernate.type.NumericBooleanConverter.class)

boolean convertedNumeric;

If the boolean value is defined in the database as something other than BOOLEAN, character or integer, the value can also be mapped using a custom AttributeConverter - see AttributeConverters.

A UserType may also be used - see Custom type mapping.

3.2.7. Byte

By default, Hibernate maps values of Byte / byte to the TINYINT JDBC type.

// these will both be mapped using TINYINT

Byte wrapper;

byte primitive;

See Byte array for mapping arrays of bytes.

3.2.8. Short

By default, Hibernate maps values of Short / short to the SMALLINT JDBC type.

// these will both be mapped using SMALLINT

Short wrapper;

short primitive;

3.2.9. Integer

By default, Hibernate maps values of Integer / int to the INTEGER JDBC type.

// these will both be mapped using INTEGER

Integer wrapper;

int primitive;

3.2.10. Long

By default, Hibernate maps values of Long / long to the BIGINT JDBC type.

// these will both be mapped using BIGINT

Long wrapper;

long primitive;

3.2.11. BigInteger

By default, Hibernate maps values of BigInteger to the NUMERIC JDBC type.

// will be mapped using NUMERIC

BigInteger wrapper;

3.2.12. Double

By default, Hibernate maps values of Double to the DOUBLE, FLOAT, REAL or NUMERIC JDBC type depending on the capabilities of the database.

// these will be mapped using DOUBLE, FLOAT, REAL or NUMERIC

// depending on the capabilities of the database

Double wrapper;

double primitive;

A specific type can be influenced using any of the JDBC type influencers covered in the JdbcType section.

If @JdbcTypeCode is used, the Dialect is still consulted to make sure the database supports the requested type. If not, an appropriate type is selected.
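For quick reference, the fixed defaults stated in sections 3.2.7 through 3.2.11 can be collected into a small lookup table. The sketch below is plain Java built on the JDK's `java.sql.JDBCType` enum; the class `DefaultIntegralMappings` and its method are hypothetical and merely restate the documented defaults, they are not how Hibernate resolves types internally.

```java
import java.math.BigInteger;
import java.sql.JDBCType;
import java.util.Map;

// Hypothetical summary of the documented defaults; not Hibernate's resolution logic.
class DefaultIntegralMappings {

    // Java type -> default JDBC type, as stated in the preceding sections.
    static final Map<Class<?>, JDBCType> DEFAULTS = Map.of(
            Byte.class, JDBCType.TINYINT,
            byte.class, JDBCType.TINYINT,
            Short.class, JDBCType.SMALLINT,
            short.class, JDBCType.SMALLINT,
            Integer.class, JDBCType.INTEGER,
            int.class, JDBCType.INTEGER,
            Long.class, JDBCType.BIGINT,
            long.class, JDBCType.BIGINT,
            BigInteger.class, JDBCType.NUMERIC
    );

    // Returns the documented default, or null for types this table does not cover.
    static JDBCType defaultFor(Class<?> javaType) {
        return DEFAULTS.get(javaType);
    }

    public static void main(String[] args) {
        System.out.println(defaultFor(long.class));       // BIGINT
        System.out.println(defaultFor(BigInteger.class)); // NUMERIC
        System.out.println(defaultFor(Double.class));     // null (database dependent)
    }
}
```

Double and Float are deliberately left out of the table because their JDBC type depends on the capabilities of the database.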

3.2.13. Float

By default, Hibernate maps values of Float to the FLOAT, REAL or

NUMERIC JDBC type depending on the capabilities of the database.

// these will be mapped using FLOAT, REAL or NUMERIC

// depending on the capabilities of the database

Float wrapper;

float primitive;

A specific type can be influenced using any of the JDBC type influencers covered in the Mapping basic values section.

If @JdbcTypeCode is used, the Dialect is still consulted to make sure the database supports the requested type. If not, an appropriate type is selected.

3.2.14. BigDecimal

By default, Hibernate maps values of BigDecimal to the NUMERIC JDBC type.

// will be mapped using NUMERIC

BigDecimal wrapper;

3.2.15. Character

By default, Hibernate maps Character to the CHAR JDBC type.

// these will be mapped using CHAR

Character wrapper;

char primitive;

3.2.16. String

By default, Hibernate maps String to the VARCHAR JDBC type.

// will be mapped using VARCHAR

String string;

// will be mapped using CLOB

@Lob

String clobString;

Optionally, you may specify the maximum length of the string using @Column(length=…), or using the @Size annotation from Hibernate Validator. For very large strings, you can use one of the constant values defined by the class org.hibernate.Length, for example:

@Column(length=Length.LONG)

private String text;

Alternatively, you may explicitly specify the JDBC type LONGVARCHAR, which is treated as a VARCHAR mapping with default length=Length.LONG when no length is explicitly specified:

@JdbcTypeCode(Types.LONGVARCHAR)

private String text;

If you use Hibernate for schema generation, Hibernate will generate DDL with a column type that is large enough to accommodate the maximum length you’ve specified.

|

If the maximum length you specify is too long to fit in the largest |

See Handling LOB data for details on mapping to a database CLOB.

For databases which support nationalized character sets, you can also store strings as nationalized data.

// will be mapped using NVARCHAR

@Nationalized

String nstring;

// will be mapped using NCLOB

@Lob

@Nationalized

String nclobString;

See Handling nationalized character data for details on mapping strings using nationalized character sets.

3.2.17. Character arrays

By default, Hibernate maps char[] to the VARCHAR JDBC type.

Since Character[] can contain null elements, it is mapped as a basic array type instead.

Prior to Hibernate 6.2, Character[] was also mapped to VARCHAR, but null elements were disallowed.

To continue mapping Character[] to the VARCHAR JDBC type, or for LOBs mapping to the CLOB JDBC type,

it is necessary to annotate the persistent attribute with @JavaType( CharacterArrayJavaType.class ).

// mapped as VARCHAR

char[] primitive;

Character[] wrapper;

@JavaType( CharacterArrayJavaType.class )

Character[] wrapperOld;

// mapped as CLOB

@Lob

char[] primitiveClob;

@Lob

Character[] wrapperClob;

See Handling LOB data for details on mapping as database LOB.

For databases which support nationalized character sets, you can also store character arrays as nationalized data.

// mapped as NVARCHAR

@Nationalized

char[] primitiveNVarchar;

@Nationalized

Character[] wrapperNVarchar;

@Nationalized

@JavaType( CharacterArrayJavaType.class )

Character[] wrapperNVarcharOld;

// mapped as NCLOB

@Lob

@Nationalized

char[] primitiveNClob;

@Lob

@Nationalized

Character[] wrapperNClob;

See Handling nationalized character data for details on mapping strings using nationalized character sets.

3.2.18. Clob / NClob

Be sure to check out Handling LOB data which covers basics of LOB handling and Handling nationalized character data which covers basics of nationalized data handling.

By default, Hibernate will map the java.sql.Clob Java type to CLOB and java.sql.NClob to NCLOB.
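Reading a `java.sql.Clob` means draining its character stream. The retrieval examples in this section call a `toString(reader)` helper that is not shown; the sketch below is one possible stand-in, written in plain Java. It uses the JDK's `javax.sql.rowset.serial.SerialClob` only to obtain a `Clob` without a database, and `ClobReadDemo`, `readFully` and `read` are hypothetical names.

```java
import java.io.IOException;
import java.io.Reader;
import java.io.StringWriter;
import java.sql.Clob;
import java.sql.SQLException;
import javax.sql.rowset.serial.SerialClob;

// Hypothetical helper class; SerialClob stands in for a Clob loaded from the database.
class ClobReadDemo {

    // Drains a Reader into a String (Reader#transferTo requires Java 10 or later).
    static String readFully(Reader reader) throws IOException {
        StringWriter writer = new StringWriter();
        reader.transferTo(writer);
        return writer.toString();
    }

    // Opens the Clob's character stream, reads it completely and closes it again.
    static String read(Clob clob) throws SQLException, IOException {
        try (Reader reader = clob.getCharacterStream()) {
            return readFully(reader);
        }
    }

    public static void main(String[] args) throws Exception {
        Clob clob = new SerialClob("My product warranty".toCharArray());
        System.out.println(read(clob)); // My product warranty
    }
}
```

Materializing the whole value this way is only reasonable for content that fits comfortably in memory; for larger values the stream would be processed incrementally instead.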

Considering we have the following database table:

CREATE TABLE Product (

id INTEGER NOT NULL,

name VARCHAR(255),

warranty CLOB,

PRIMARY KEY (id)

)

Let’s first map this using the @Lob Jakarta Persistence annotation and the java.sql.Clob type:

CLOB mapped to java.sql.Clob

@Entity(name = "Product")

public static class Product {

@Id

private Integer id;

private String name;

@Lob

private Clob warranty;

//Getters and setters are omitted for brevity

}

To persist such an entity, you have to create a Clob using the ClobProxy Hibernate utility:

java.sql.Clob entity

String warranty = "My product warranty";

final Product product = new Product();

product.setId(1);

product.setName("Mobile phone");

product.setWarranty(ClobProxy.generateProxy(warranty));

entityManager.persist(product);

To retrieve the Clob content, you need to transform the underlying java.io.Reader:

java.sql.Clob entity

Product product = entityManager.find(Product.class, productId);

try (Reader reader = product.getWarranty().getCharacterStream()) {

assertEquals("My product warranty", toString(reader));

}

We could also map the CLOB in a materialized form. This way, we can either use a String or a char[].

CLOB mapped to String

@Entity(name = "Product")

public static class Product {

@Id

private Integer id;

private String name;

@Lob

private String warranty;

//Getters and setters are omitted for brevity

}

We might even want the materialized data as a char array.

char[] mapping

@Entity(name = "Product")

public static class Product {

@Id

private Integer id;

private String name;

@Lob

private char[] warranty;

//Getters and setters are omitted for brevity

}

Just like with CLOB, Hibernate can also deal with NCLOB SQL data types:

NCLOB - SQL

CREATE TABLE Product (

id INTEGER NOT NULL ,

name VARCHAR(255) ,

warranty nclob ,

PRIMARY KEY ( id )

)

Hibernate can map the NCLOB to a java.sql.NClob:

NCLOB mapped to java.sql.NClob

@Entity(name = "Product")

public static class Product {

@Id

private Integer id;

private String name;

@Lob

@Nationalized

// Clob also works, because NClob extends Clob.

// The database type is still NCLOB either way and handled as such.

private NClob warranty;

//Getters and setters are omitted for brevity

}

To persist such an entity, you have to create an NClob using the NClobProxy Hibernate utility:

java.sql.NClob entity

String warranty = "My product warranty";

final Product product = new Product();

product.setId(1);

product.setName("Mobile phone");

product.setWarranty(NClobProxy.generateProxy(warranty));

entityManager.persist(product);

To retrieve the NClob content, you need to transform the underlying java.io.Reader:

java.sql.NClob entity

Product product = entityManager.find(Product.class, 1);

try (Reader reader = product.getWarranty().getCharacterStream()) {

assertEquals("My product warranty", toString(reader));

}

We could also map the NCLOB in a materialized form. This way, we can either use a String or a char[].

NCLOB mapped to String

@Entity(name = "Product")

public static class Product {

@Id

private Integer id;

private String name;

@Lob

@Nationalized

private String warranty;

//Getters and setters are omitted for brevity

}

We might even want the materialized data as a char array.

char[] mapping

@Entity(name = "Product")

public static class Product {

@Id

private Integer id;

private String name;

@Lob

@Nationalized

private char[] warranty;

//Getters and setters are omitted for brevity

}

3.2.19. Byte array

By default, Hibernate maps byte[] to the VARBINARY JDBC type.

Since Byte[] can contain null elements, it is mapped as a basic array type instead.

Prior to Hibernate 6.2, Byte[] was also mapped to VARBINARY, but null elements were disallowed.

To continue mapping Byte[] to the VARBINARY JDBC type or, for LOBs, to the BLOB JDBC type,

it is necessary to annotate the persistent attribute with @JavaType( ByteArrayJavaType.class ).

// mapped as VARBINARY

private byte[] primitive;

private Byte[] wrapper;

@JavaType( ByteArrayJavaType.class )

private Byte[] wrapperOld;

// mapped as (materialized) BLOB

@Lob

private byte[] primitiveLob;

@Lob

private Byte[] wrapperLob;

Just like with strings, you may specify the maximum length using @Column(length=…)

or the @Size annotation from Hibernate Validator.

For very large arrays, you can use the constants defined by org.hibernate.Length.

Alternatively @JdbcTypeCode(Types.LONGVARBINARY) is treated as a VARBINARY mapping

with default length=Length.LONG when no length is explicitly specified.

If you use Hibernate for schema generation, Hibernate will generate DDL with a column type that is large enough to accommodate the maximum length you’ve specified.

|

If the maximum length you specify is too long to fit in the largest |

See Handling LOB data for details on mapping to a database BLOB.

3.2.20. Blob

Be sure to check out Handling LOB data, which covers the basics of LOB handling.

By default, Hibernate will map the java.sql.Blob Java type to BLOB.

Considering we have the following database table:

CREATE TABLE Product (

id INTEGER NOT NULL ,

image blob ,

name VARCHAR(255) ,

PRIMARY KEY ( id )

)

Let’s first map this using the JDBC java.sql.Blob type.

BLOB mapped to java.sql.Blob

@Entity(name = "Product")

public static class Product {

@Id

private Integer id;

private String name;

@Lob

private Blob image;

//Getters and setters are omitted for brevity

}

To persist such an entity, you have to create a Blob using the BlobProxy Hibernate utility:

java.sql.Blob entity

byte[] image = new byte[] {1, 2, 3};

final Product product = new Product();

product.setId(1);

product.setName("Mobile phone");

product.setImage(BlobProxy.generateProxy(image));

entityManager.persist(product);

To retrieve the Blob content, you need to transform the underlying java.io.InputStream:

java.sql.Blob entity

Product product = entityManager.find(Product.class, productId);

try (InputStream inputStream = product.getImage().getBinaryStream()) {

assertArrayEquals(new byte[] {1, 2, 3}, toBytes(inputStream));

}

We could also map the BLOB in a materialized form (e.g. byte[]).

BLOB mapped to byte[]

@Entity(name = "Product")

public static class Product {

@Id

private Integer id;

private String name;

@Lob

private byte[] image;

//Getters and setters are omitted for brevity

}

3.2.21. Duration

By default, Hibernate maps Duration to the NUMERIC SQL type.

It’s possible to map Duration to the INTERVAL_SECOND SQL type using @JdbcTypeCode(INTERVAL_SECOND) or by setting hibernate.type.preferred_duration_jdbc_type=INTERVAL_SECOND

private Duration duration;

3.2.22. Instant

Instant is mapped to the TIMESTAMP_UTC SQL type.

// mapped as TIMESTAMP_UTC

private Instant instant;

See Handling temporal data for basics of temporal mapping.

3.2.23. LocalDate

LocalDate is mapped to the DATE JDBC type.

// mapped as DATE

private LocalDate localDate;

See Handling temporal data for basics of temporal mapping.

3.2.24. LocalDateTime

LocalDateTime is mapped to the TIMESTAMP JDBC type.

// mapped as TIMESTAMP

private LocalDateTime localDateTime;

See Handling temporal data for basics of temporal mapping.

3.2.25. LocalTime

LocalTime is mapped to the TIME JDBC type.

// mapped as TIME

private LocalTime localTime;

See Handling temporal data for basics of temporal mapping.

3.2.26. OffsetDateTime

OffsetDateTime is mapped to the TIMESTAMP or TIMESTAMP_WITH_TIMEZONE JDBC type

depending on the database.

// mapped as TIMESTAMP or TIMESTAMP_WITH_TIMEZONE

private OffsetDateTime offsetDateTime;

See Handling temporal data for basics of temporal mapping. See Using a specific time zone for basics of time-zone handling.

3.2.27. OffsetTime

OffsetTime is mapped to the TIME or TIME_WITH_TIMEZONE JDBC type

depending on the database.

// mapped as TIME or TIME_WITH_TIMEZONE

private OffsetTime offsetTime;

See Handling temporal data for basics of temporal mapping. See Using a specific time zone for basics of time-zone handling.

3.2.28. TimeZone

TimeZone is mapped to the VARCHAR JDBC type.

// mapped as VARCHAR

private TimeZone timeZone;

3.2.29. ZonedDateTime

ZonedDateTime is mapped to the TIMESTAMP or TIMESTAMP_WITH_TIMEZONE JDBC type

depending on the database.

// mapped as TIMESTAMP or TIMESTAMP_WITH_TIMEZONE

private ZonedDateTime zonedDateTime;

See Handling temporal data for basics of temporal mapping. See Using a specific time zone for basics of time-zone handling.

3.2.30. ZoneOffset

ZoneOffset is mapped to the VARCHAR JDBC type.

// mapped as VARCHAR

private ZoneOffset zoneOffset;

3.2.31. Calendar

See Handling temporal data for basics of temporal mapping. See Using a specific time zone for basics of time-zone handling.

3.2.32. Date

See Handling temporal data for basics of temporal mapping. See Using a specific time zone for basics of time-zone handling.

3.2.33. Time

See Handling temporal data for basics of temporal mapping. See Using a specific time zone for basics of time-zone handling.

3.2.34. Timestamp

See Handling temporal data for basics of temporal mapping. See Using a specific time zone for basics of time-zone handling.

3.2.35. Class

Hibernate maps Class references to the VARCHAR JDBC type.

// mapped as VARCHAR

private Class<?> clazz;

3.2.36. Currency

Hibernate maps Currency references to the VARCHAR JDBC type.

// mapped as VARCHAR

private Currency currency;

3.2.37. Locale

Hibernate maps Locale references to the VARCHAR JDBC type.

// mapped as VARCHAR

private Locale locale;

3.2.38. UUID

Hibernate allows mapping UUID values in a number of ways. By default, Hibernate will

store UUID values in the native form by using the SQL type UUID or in binary form with the BINARY JDBC type

if the database does not have a native UUID type.

|

The default uses the binary representation because it uses a more efficient column storage. However, many applications prefer the readability of the character-based column storage. To switch the default mapping, set the |

UUID as binary

As mentioned, this is the default mapping for UUID attributes.

Maps the UUID to a byte[] using java.util.UUID#getMostSignificantBits and java.util.UUID#getLeastSignificantBits and stores that as BINARY data.

Chosen as the default simply because it is generally more efficient from a storage perspective.

UUID as (var)char

Maps the UUID to a String using java.util.UUID#toString and java.util.UUID#fromString and stores that as CHAR or VARCHAR data.
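The two representations can be compared with a small JDK-only sketch. It is not Hibernate's implementation: the class name UuidEncodingSketch is hypothetical, and the byte order (most significant half first, big-endian) is an assumption of this sketch, since the text above only names the two accessor methods.

```java
import java.nio.ByteBuffer;
import java.util.UUID;

// Hypothetical helper for illustration only; not a Hibernate class.
final class UuidEncodingSketch {

    // Packs the two 64-bit halves into 16 bytes, most significant half first (assumed order).
    static byte[] toBytes(UUID uuid) {
        return ByteBuffer.allocate(16)
                .putLong(uuid.getMostSignificantBits())
                .putLong(uuid.getLeastSignificantBits())
                .array();
    }

    // Rebuilds the UUID from the 16-byte form produced by toBytes.
    static UUID fromBytes(byte[] bytes) {
        if (bytes.length != 16) {
            throw new IllegalArgumentException("Expected 16 bytes, got " + bytes.length);
        }
        ByteBuffer buffer = ByteBuffer.wrap(bytes);
        return new UUID(buffer.getLong(), buffer.getLong());
    }

    public static void main(String[] args) {
        UUID id = UUID.fromString("123e4567-e89b-12d3-a456-426614174000");
        byte[] binary = toBytes(id);   // binary form
        String text = id.toString();   // character form

        System.out.println(binary.length);                     // 16
        System.out.println(text.length());                     // 36
        System.out.println(fromBytes(binary).equals(id));      // true
        System.out.println(UUID.fromString(text).equals(id));  // true
    }
}
```

The binary form needs 16 bytes against 36 characters for the text form, which is the storage trade-off described above; the text form is the one a person can read directly in a query result.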

UUID as identifier

Hibernate supports using UUID values as identifiers, and they can even be generated on the user’s behalf. For details, see the discussion of generators in Identifiers.

3.2.39. InetAddress

By default, Hibernate will map InetAddress to the INET SQL type and fall back to BINARY if necessary.

private InetAddress address;

3.2.40. JSON mapping

Hibernate will only use the JSON type if explicitly configured through @JdbcTypeCode( SqlTypes.JSON ).

The JSON library used for serialization/deserialization is detected automatically,

but can be overridden by setting hibernate.type.json_format_mapper

as can be read in the Configurations section.

@JdbcTypeCode( SqlTypes.JSON )

private Map<String, String> stringMap;

3.2.41. XML mapping

Hibernate will only use the XML type if explicitly configured through @JdbcTypeCode( SqlTypes.SQLXML ).

The XML library used for serialization/deserialization is detected automatically,

but can be overridden by setting hibernate.type.xml_format_mapper

as can be read in the Configurations section.

@JdbcTypeCode( SqlTypes.SQLXML )

private Map<String, StringNode> stringMap;

3.2.42. Basic array mapping

Basic arrays, other than byte[]/Byte[] and char[]/Character[], map to the type code SqlTypes.ARRAY by default,

which maps to the SQL standard array type if possible,

as determined via the new methods getArrayTypeName and supportsStandardArrays of org.hibernate.dialect.Dialect.

If SQL standard array types are not available, data will be modeled as SqlTypes.JSON, SqlTypes.XML or SqlTypes.VARBINARY,

depending on the database support as determined via the new method org.hibernate.dialect.Dialect.getPreferredSqlTypeCodeForArray.

Short[] wrapper;

short[] primitive;

3.2.43. Basic collection mapping

Basic collections (only subtypes of Collection), which are not annotated with @ElementCollection,

map to the type code SqlTypes.ARRAY by default, which maps to the SQL standard array type if possible,

as determined via the new methods getArrayTypeName and supportsStandardArrays of org.hibernate.dialect.Dialect.

If SQL standard array types are not available, data will be modeled as SqlTypes.JSON, SqlTypes.XML or SqlTypes.VARBINARY,

depending on the database support as determined via the new method org.hibernate.dialect.Dialect.getPreferredSqlTypeCodeForArray.

List<Short> list;

SortedSet<Short> sortedSet;

3.2.44. Compositional basic mapping

The compositional approach allows defining how the mapping should work in terms of influencing individual parts that make up a basic-value mapping. This section will look at these individual parts and the specifics of influencing each.

JavaType

Hibernate needs to understand certain aspects of the Java type to handle values properly and efficiently.

Hibernate understands these capabilities through its org.hibernate.type.descriptor.java.JavaType contract.

Hibernate provides built-in support for many JDK types (e.g. Integer, String), but also supports the ability

for the application to change the handling for any of the standard JavaType registrations as well as

add in handling for non-standard types. Hibernate provides multiple ways for the application to influence

the JavaType descriptor to use.

The resolution can be influenced locally using the @JavaType annotation on a particular mapping. The

indicated descriptor will be used just for that mapping. There are also forms of @JavaType for influencing

the keys of a Map (@MapKeyJavaType), the index of a List or array (@ListIndexJavaType), the identifier

of an ID-BAG mapping (@CollectionIdJavaType) as well as the discriminator (@AnyDiscriminator) and

key (@AnyKeyJavaClass, @AnyKeyJavaType) of an ANY mapping.

The resolution can also be influenced globally by registering the appropriate JavaType descriptor with the

JavaTypeRegistry. This approach is able to both "override" the handling for certain Java types or

to register new types. See Registries for discussion of JavaTypeRegistry.

See Resolving the composition for a discussion of the process used to resolve the mapping composition.

JdbcType

Hibernate also needs to understand aspects of the JDBC type it should use (how it should bind values,

how it should extract values, etc.) which is the role of its org.hibernate.type.descriptor.jdbc.JdbcType

contract. Hibernate provides multiple ways for the application to influence the JdbcType descriptor to use.

Locally, the resolution can be influenced using either the @JdbcType or @JdbcTypeCode annotations. There

are also annotations for influencing the JdbcType in relation to Map keys (@MapKeyJdbcType, @MapKeyJdbcTypeCode),

the index of a List or array (@ListIndexJdbcType, @ListIndexJdbcTypeCode), the identifier of an ID-BAG mapping

(@CollectionIdJdbcType, @CollectionIdJdbcTypeCode) as well as the key of an ANY mapping (@AnyKeyJdbcType,

@AnyKeyJdbcTypeCode). The @JdbcType specifies a specific JdbcType implementation to use while @JdbcTypeCode

specifies a "code" that is then resolved against the JdbcTypeRegistry.

|

The "type code" relative to a |

Customizing the JdbcTypeRegistry can be accomplished through @JdbcTypeRegistration and

TypeContributor. See Registries for discussion of JdbcTypeRegistry.

See TypeContributor for discussion of TypeContributor.

See the @JdbcTypeCode Javadoc for details.

See Resolving the composition for a discussion of the process used to resolve the mapping composition.

MutabilityPlan

MutabilityPlan is the means by which Hibernate understands how to deal with the domain value in terms

of its internal mutability as well as related concerns such as making copies. While it seems like a minor

concern, it can have a major impact on performance. See AttributeConverter Mutability Plan for one case where

this can manifest. See also Case Study : BitSet for another discussion.

The MutabilityPlan for a mapping can be influenced by any of the following annotations:

-

@Mutability

-

@Immutable

-

@MapKeyMutability

-

@CollectionIdMutability

Hibernate checks the following places for @Mutability and @Immutable, in order of precedence:

-

Local to the mapping

-

On the associated AttributeConverter implementation class (if one)

-

On the value’s Java type

In most cases, the fallback defined by JavaType#getMutabilityPlan is the proper strategy.

Hibernate uses MutabilityPlan to:

-

Check whether a value is considered dirty

-

Make deep copies

-

Marshal values to and from the second-level cache

Generally speaking, immutable values perform better in all of these cases:

-

To check for dirtiness, Hibernate just needs to check object identity (==) as opposed to equality (Object#equals).

-

The same value instance can be used as the deep copy of itself.

-

The same value can be used from the second-level cache as well as the value we put into the second-level cache.

If a particular Java type is considered mutable (e.g. a Date), @Immutable or an immutable-specific

MutabilityPlan implementation can be specified to have Hibernate treat the value as immutable. This

also acts as a contract from the application that the internal state of these objects is not changed

by the application. Specifying that a mutable type is immutable and then changing the internal state

will lead to problems; so only do this if the application unequivocally does not change the internal

state.
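Why this contract matters can be shown with a JDK-only sketch. CopyPlanSketch and MutabilitySketch are hypothetical names and deliberately not Hibernate's MutabilityPlan interface; they only model the two operations discussed above (copying a value and checking it for dirtiness) under the assumption that an immutable plan compares by identity.

```java
import java.util.Date;
import java.util.Objects;

// Hypothetical stand-in for the idea behind MutabilityPlan; NOT Hibernate's actual interface.
interface CopyPlanSketch<T> {
    T deepCopy(T value);
    boolean isDirty(T snapshot, T current);
}

final class MutabilitySketch {

    // Immutable values: the instance is its own copy, and an identity check suffices.
    static <T> CopyPlanSketch<T> immutablePlan() {
        return new CopyPlanSketch<T>() {
            public T deepCopy(T value) { return value; }
            public boolean isDirty(T snapshot, T current) { return snapshot != current; }
        };
    }

    // Mutable Date values: a real copy is needed, and comparison must use equals.
    static CopyPlanSketch<Date> mutableDatePlan() {
        return new CopyPlanSketch<Date>() {
            public Date deepCopy(Date value) {
                return value == null ? null : new Date(value.getTime());
            }
            public boolean isDirty(Date snapshot, Date current) {
                return !Objects.equals(snapshot, current);
            }
        };
    }

    // Takes a snapshot, mutates the Date in place, and reports whether the plan notices.
    static boolean detectsInPlaceChange(CopyPlanSketch<Date> plan) {
        Date current = new Date(0L);
        Date snapshot = plan.deepCopy(current);
        current.setTime(1_000L); // internal state changes, the reference does not
        return plan.isDirty(snapshot, current);
    }

    public static void main(String[] args) {
        System.out.println(detectsInPlaceChange(mutableDatePlan()));                       // true
        System.out.println(detectsInPlaceChange(MutabilitySketch.<Date>immutablePlan()));  // false
    }
}
```

With the immutable plan the snapshot and the current value are the same object, so an in-place change goes unnoticed. In this model, that is the kind of problem the paragraph above warns about when a mutable type is declared immutable and then modified.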

See Resolving the composition for a discussion of the process used to resolve the mapping composition.

BasicValueConverter

BasicValueConverter is roughly analogous to AttributeConverter in that it describes a conversion to

happen when reading or writing values of a basic-valued model part. In fact, internally Hibernate wraps

an applied AttributeConverter in a BasicValueConverter. It also applies implicit BasicValueConverter

converters in certain cases such as enum handling, etc.

Hibernate does not provide an explicit facility to influence these conversions beyond AttributeConverter.

See AttributeConverters.

See Resolving the composition for a discussion of the process used to resolve the mapping composition.

Resolving the composition

Using this composition approach, Hibernate will need to resolve certain parts of this mapping. Often this involves "filling in the blanks" as it will be configured for just parts of the mapping. This section outlines how this resolution happens.

|

This is a complicated process and is only covered at a high level for the most common cases here. For the full specifics, consult the source code for |

First, we look for a custom type. If found, this takes precedence. See Custom type mapping for details.

If an AttributeConverter is applied, we use it as the basis for the resolution:

-

If @JavaType is also used, that specific JavaType is used for the converter’s "domain type". Otherwise, the Java type defined by the converter as its "domain type" is resolved against the JavaTypeRegistry.

-

If @JdbcType or @JdbcTypeCode is used, the indicated JdbcType is used and the converted "relational Java type" is determined by JdbcType#getJdbcRecommendedJavaTypeMapping. Otherwise, the Java type defined by the converter as its relational type is used and the JdbcType is determined by JavaType#getRecommendedJdbcType.

-

The MutabilityPlan can be specified using @Mutability or @Immutable on the AttributeConverter implementation, the basic value mapping or the Java type used as the domain-type. Otherwise, JavaType#getMutabilityPlan for the conversion’s domain-type is used to determine the mutability-plan.

Next we try to resolve the JavaType to use for the mapping. We check for an explicit @JavaType and use the specified

JavaType if found. Next any "implicit" indication is checked; for example, the index for a List has the implicit Java type

of Integer. Next, we use reflection if possible. If we are unable to determine the JavaType to use through the preceding

steps, we try to resolve an explicitly specified JdbcType to use and, if found, use its

JdbcType#getJdbcRecommendedJavaTypeMapping as the mapping’s JavaType. If we are not able to determine the

JavaType by this point, an error is thrown.

The JavaType resolved earlier is then inspected for a number of special cases.

-

For enum values, we check for an explicit @Enumerated and create an enumeration mapping. Note that this resolution still uses any explicit JdbcType indicators.

-

For temporal values, we check for @Temporal and create a temporal mapping. Note that this resolution still uses any explicit JdbcType indicators; this includes @JdbcType and @JdbcTypeCode, as well as @TimeZoneStorage and @TimeZoneColumn if appropriate.

The fallback at this point is to use the JavaType and JdbcType determined in earlier steps to create a

JDBC-mapping (which encapsulates the JavaType and JdbcType) and combine it with the resolved MutabilityPlan.

When using the compositional approach, there are other ways to influence the resolution as covered in Enums, Handling temporal data, Handling LOB data and Handling nationalized character data.

See TypeContributor for an alternative to @JavaTypeRegistration and @JdbcTypeRegistration.

3.2.45. Custom type mapping

Another approach is to supply the implementation of the org.hibernate.usertype.UserType contract using @Type.

There are also corresponding, specialized forms of @Type for specific model parts:

-

When mapping a Map, @Type describes the Map value while @MapKeyType describes the Map key

-

When mapping an id-bag, @Type describes the elements while @CollectionIdType describes the collection-id

-

For other collection mappings, @Type describes the elements

-

For discriminated association mappings (@Any and @ManyToAny), @Type describes the discriminator value

@Type allows for more complex mapping concerns, but AttributeConverter and

Compositional basic mapping should generally be preferred as simpler solutions.

3.2.46. Handling nationalized character data

How nationalized character data is handled and stored depends on the underlying database.

Most databases support storing nationalized character data through the standardized SQL NCHAR, NVARCHAR, LONGNVARCHAR and NCLOB variants.

Others support storing nationalized data as part of CHAR, VARCHAR, LONGVARCHAR and CLOB. Generally these databases do not support NCHAR, NVARCHAR, LONGNVARCHAR and NCLOB, even as aliased types.

Ultimately, Hibernate understands this through Dialect#getNationalizationSupport().

To ensure nationalized character data gets stored and accessed correctly, @Nationalized

can be used locally or hibernate.use_nationalized_character_data can be set globally.

|

|

|

For databases with no See also Handling LOB data regarding similar limitation for databases which do not support

explicit |

Considering we have the following database table:

NVARCHAR - SQL

CREATE TABLE Product (

id INTEGER NOT NULL ,

name VARCHAR(255) ,

warranty NVARCHAR(255) ,

PRIMARY KEY ( id )

)

To map a specific attribute to a nationalized variant data type, Hibernate defines the @Nationalized annotation.

NVARCHAR mapping

@Entity(name = "Product")

public static class Product {

@Id

private Integer id;

private String name;

@Nationalized

private String warranty;

//Getters and setters are omitted for brevity

}

3.2.47. Handling LOB data

The @Lob annotation specifies that character or binary data should be written to the database

using the special JDBC APIs for handling database LOB (Large OBject) types.

|

How JDBC deals with Some database drivers (i.e. PostgreSQL) are especially problematic and in such cases you might have to do some extra work to get LOBs functioning. But that’s beyond the scope of this guide. |

|

For databases with no |

There are two ways a LOB may be represented in the Java domain model:

-

using a special JDBC-defined LOB locator type, or

-

using a regular "materialized" type like String, char[], or byte[].

LOB Locator

The JDBC LOB locator types are:

-

java.sql.Blob

-

java.sql.Clob

-

java.sql.NClob

These types represent references to off-table LOB data. In principle, they allow JDBC drivers to support more efficient access to the LOB data. Some drivers stream parts of the LOB data as needed, potentially consuming less memory.

However, java.sql.Blob and java.sql.Clob can be unnatural to deal with and suffer

certain limitations.

For example, it’s not portable to access a LOB locator after the end of the transaction

in which it was obtained.
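Reading a locator's content means draining a stream, which is the role played by the toString(reader) and toBytes(inputStream) helpers in the earlier Clob and Blob examples. A minimal sketch of such helpers follows, assuming Java 11 or later. LobReadingSketch and its method names are hypothetical, and the JDK's SerialClob and SerialBlob are used only as in-memory stand-ins so that the sketch runs without a database; in an application the locators would come from a loaded entity.

```java
import java.io.IOException;
import java.io.InputStream;
import java.io.Reader;
import java.io.StringWriter;
import java.io.UncheckedIOException;
import java.sql.Blob;
import java.sql.Clob;
import java.sql.SQLException;
import javax.sql.rowset.serial.SerialBlob;
import javax.sql.rowset.serial.SerialClob;

// Hypothetical helpers for illustration; not part of Hibernate.
final class LobReadingSketch {

    // Drains the locator's character stream into a String.
    static String clobToString(Clob clob) {
        try (Reader reader = clob.getCharacterStream()) {
            StringWriter out = new StringWriter();
            reader.transferTo(out);
            return out.toString();
        } catch (SQLException e) {
            throw new IllegalStateException(e);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    // Drains the locator's binary stream into a byte array.
    static byte[] blobToBytes(Blob blob) {
        try (InputStream in = blob.getBinaryStream()) {
            return in.readAllBytes();
        } catch (SQLException e) {
            throw new IllegalStateException(e);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    // In-memory stand-ins for locators that would normally come from the database.
    static Clob inMemoryClob(String text) {
        try {
            return new SerialClob(text.toCharArray());
        } catch (SQLException e) {
            throw new IllegalStateException(e);
        }
    }

    static Blob inMemoryBlob(byte[] data) {
        try {
            return new SerialBlob(data);
        } catch (SQLException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println(clobToString(inMemoryClob("My product warranty")));      // My product warranty
        System.out.println(blobToBytes(inMemoryBlob(new byte[] {1, 2, 3})).length); // 3
    }
}
```

Both helpers read the whole content into memory, so they give up the streaming advantage of a locator; they are meant for small values and for tests.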

Materialized LOB

Alternatively, Hibernate lets you access LOB data via the familiar Java types String,

char[], and byte[]. But of course this requires materializing the entire contents

of the LOB in memory when the object is first retrieved. Whether this performance cost

is acceptable depends on many factors, including the vagaries of the JDBC driver.

|

You don’t need to use a |

3.2.48. Handling temporal data

Hibernate supports mapping temporal values in numerous ways, though ultimately these strategies boil down to the following Date/Time types defined by the SQL specification:

- DATE

-

Represents a calendar date by storing years, months and days.

- TIME

-

Represents the time of a day by storing hours, minutes and seconds.

- TIMESTAMP

-

Represents both a DATE and a TIME plus nanoseconds.

- TIMESTAMP WITH TIME ZONE

-

Represents both a DATE and a TIME plus nanoseconds and zone id or offset.

The mapping of java.time temporal types to the specific SQL Date/Time types is implied as follows:

- DATE

-

java.time.LocalDate

- TIME

-

java.time.LocalTime, java.time.OffsetTime

- TIMESTAMP

-

java.time.Instant, java.time.LocalDateTime, java.time.OffsetDateTime and java.time.ZonedDateTime

- TIMESTAMP WITH TIME ZONE

-

java.time.OffsetDateTime, java.time.ZonedDateTime

Although Hibernate recommends the use of the java.time package for representing temporal values,

it does support using java.sql.Date, java.sql.Time, java.sql.Timestamp, java.util.Date and

java.util.Calendar.

The mappings for java.sql.Date, java.sql.Time, java.sql.Timestamp are implicit:

- DATE

-

java.sql.Date

- TIME

-

java.sql.Time

- TIMESTAMP

-

java.sql.Timestamp

|

Applying |

When using java.util.Date or java.util.Calendar, Hibernate assumes TIMESTAMP. To alter that,

use @Temporal.

// mapped as TIMESTAMP by default

Date dateAsTimestamp;

// explicitly mapped as DATE

@Temporal(TemporalType.DATE)

Date dateAsDate;

// explicitly mapped as TIME

@Temporal(TemporalType.TIME)

Date dateAsTime;

Using a specific time zone

By default, Hibernate is going to use the PreparedStatement.setTimestamp(int parameterIndex, java.sql.Timestamp) or

PreparedStatement.setTime(int parameterIndex, java.sql.Time x) when saving a java.sql.Timestamp or a java.sql.Time property.

When the time zone is not specified, the JDBC driver is going to use the underlying JVM default time zone, which might not be suitable if the application is used from all across the globe. For this reason, it is very common to use a single reference time zone (e.g. UTC) whenever saving/loading data from the database.
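The effect of the default time zone can be seen without any JDBC code. The sketch below is only an illustration of the underlying problem, with a hypothetical class name: it prints the wall-clock reading that one and the same instant has in three zones, which is what a zone-less TIMESTAMP column would end up holding if each JVM applied its own default.

```java
import java.time.Instant;
import java.time.LocalDateTime;
import java.time.ZoneId;

// Hypothetical illustration; no JDBC or Hibernate involved.
final class WallClockSketch {

    // The wall-clock reading of one instant as seen from a given zone.
    static LocalDateTime wallClock(Instant instant, ZoneId zone) {
        return LocalDateTime.ofInstant(instant, zone);
    }

    public static void main(String[] args) {
        Instant saved = Instant.parse("2024-01-15T12:00:00Z");

        System.out.println(wallClock(saved, ZoneId.of("UTC")));              // 2024-01-15T12:00
        System.out.println(wallClock(saved, ZoneId.of("America/New_York"))); // 2024-01-15T07:00
        System.out.println(wallClock(saved, ZoneId.of("Asia/Tokyo")));       // 2024-01-15T21:00
    }
}
```

Three application nodes in these zones would write three different values for the same moment, which is why a single reference time zone is commonly used.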

One alternative would be to configure all JVMs to use the reference time zone:

- Declaratively

-

java -Duser.timezone=UTC ...

- Programmatically

-

TimeZone.setDefault( TimeZone.getTimeZone( "UTC" ) );

However, as explained in this article, this is not always practical, especially for front-end nodes.

For this reason, Hibernate offers the hibernate.jdbc.time_zone configuration property which can be configured:

- Declaratively, at the SessionFactory level

-

settings.put( AvailableSettings.JDBC_TIME_ZONE, TimeZone.getTimeZone( "UTC" ) );

- Programmatically, on a per Session basis

-

Session session = sessionFactory().withOptions().jdbcTimeZone( TimeZone.getTimeZone( "UTC" ) ).openSession();

With this configuration property in place, Hibernate is going to call the PreparedStatement.setTimestamp(int parameterIndex, java.sql.Timestamp, Calendar cal) or

PreparedStatement.setTime(int parameterIndex, java.sql.Time x, Calendar cal), where the java.util.Calendar references the time zone provided via the hibernate.jdbc.time_zone property.

Handling time zoned temporal data

By default, Hibernate will convert and normalize OffsetDateTime and ZonedDateTime to java.sql.Timestamp in UTC.

This behavior can be altered by configuring the hibernate.timezone.default_storage property:

settings.put(

AvailableSettings.TIMEZONE_DEFAULT_STORAGE,

TimeZoneStorageType.AUTO

);

The possible storage types are AUTO, COLUMN, NATIVE and NORMALIZE (the default).

With COLUMN, Hibernate will save the time zone information into a dedicated column,

whereas NATIVE requires database support for a TIMESTAMP WITH TIME ZONE data type

that retains the time zone information.

NORMALIZE doesn’t store time zone information and will simply convert the timestamp to UTC.

Hibernate understands what a database/dialect supports through Dialect#getTimeZoneSupport

and will abort with a boot error if NATIVE is used in conjunction with a database that doesn’t support this.

For AUTO, Hibernate tries to use NATIVE if possible and falls back to COLUMN otherwise.
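The difference between dropping and retaining the offset can be modeled with java.time alone. This is a conceptual sketch with hypothetical names, not Hibernate's storage code; in particular, representing the stored offset as a number of seconds is an assumption made here for illustration.

```java
import java.time.Instant;
import java.time.OffsetDateTime;
import java.time.ZoneOffset;

// Hypothetical model of the storage strategies; not Hibernate internals.
final class TimeZoneStorageSketch {

    // NORMALIZE-like: only the UTC instant survives the round trip.
    static OffsetDateTime roundTripNormalized(OffsetDateTime value) {
        Instant stored = value.toInstant();
        return stored.atOffset(ZoneOffset.UTC);
    }

    // COLUMN-like: the offset travels as a second value and is reapplied on read.
    static OffsetDateTime roundTripWithOffsetColumn(OffsetDateTime value) {
        Instant storedInstant = value.toInstant();
        int storedOffsetSeconds = value.getOffset().getTotalSeconds(); // assumed representation
        return storedInstant.atOffset(ZoneOffset.ofTotalSeconds(storedOffsetSeconds));
    }

    public static void main(String[] args) {
        OffsetDateTime original = OffsetDateTime.parse("2024-03-10T09:30:00+05:30");

        System.out.println(roundTripNormalized(original));       // 2024-03-10T04:00Z
        System.out.println(roundTripWithOffsetColumn(original)); // 2024-03-10T09:30+05:30
    }
}
```

Both results denote the same instant as the original, but only the second one is equal to it including the offset.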

3.2.49. @TimeZoneStorage

Hibernate supports defining the storage to use for time zone information for individual properties

via the @TimeZoneStorage and @TimeZoneColumn annotations.

The storage type can be specified via @TimeZoneStorage by specifying an org.hibernate.annotations.TimeZoneStorageType.

The default storage type is AUTO which will ensure that the time zone information is retained.

The @TimeZoneColumn annotation can be used in conjunction with AUTO or COLUMN and allows defining

the column details for the time zone information storage.

|

Storing the zone offset might be problematic for future timestamps as zone rules can change.

Due to this, storing the offset is only safe for past timestamps, and we advise sticking to the |

@TimeZoneColumn usage

@TimeZoneStorage(TimeZoneStorageType.COLUMN)

@TimeZoneColumn(name = "birthtime_offset_offset")

@Column(name = "birthtime_offset")

private OffsetTime offsetTimeColumn;

@TimeZoneStorage(TimeZoneStorageType.COLUMN)

@TimeZoneColumn(name = "birthday_offset_offset")

@Column(name = "birthday_offset")

private OffsetDateTime offsetDateTimeColumn;

@TimeZoneStorage(TimeZoneStorageType.COLUMN)

@TimeZoneColumn(name = "birthday_zoned_offset")

@Column(name = "birthday_zoned")

private ZonedDateTime zonedDateTimeColumn;

3.2.50. AttributeConverters

With a custom AttributeConverter, the application developer can map a given JDBC type to an entity basic type.

In the following example, the java.time.Period is going to be mapped to a VARCHAR database column.

java.time.Period custom AttributeConverter

@Converter

public class PeriodStringConverter

implements AttributeConverter<Period, String> {

@Override

public String convertToDatabaseColumn(Period attribute) {

return attribute.toString();

}

@Override

public Period convertToEntityAttribute(String dbData) {

return Period.parse(dbData);

}

}

To make use of this custom converter, the @Convert annotation must decorate the entity attribute.

java.time.Period AttributeConverter mapping

@Entity(name = "Event")

public static class Event {

@Id

@GeneratedValue

private Long id;

@Convert(converter = PeriodStringConverter.class)

@Column(columnDefinition = "")

private Period span;

//Getters and setters are omitted for brevity

}

When persisting such an entity, Hibernate will do the type conversion based on the AttributeConverter logic:

AttributeConverter

INSERT INTO Event ( span, id )

VALUES ( 'P1Y2M3D', 1 )

An AttributeConverter can be applied globally (@Converter( autoApply=true )) or locally.
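The conversion itself is plain java.time and can be tried in isolation. The sketch below mirrors the two converter methods as static functions so that it runs without Jakarta Persistence on the classpath; PeriodConversionSketch is a hypothetical name, and the null checks are an addition of this sketch, modeled on the MoneyConverter shown later in this section.

```java
import java.time.Period;

// Hypothetical stand-alone mirror of the converter logic; not a Jakarta Persistence class.
final class PeriodConversionSketch {

    // Entity attribute -> column value, passing null through unchanged.
    static String toColumn(Period attribute) {
        return attribute == null ? null : attribute.toString();
    }

    // Column value -> entity attribute, passing null through unchanged.
    static Period toAttribute(String dbData) {
        return dbData == null ? null : Period.parse(dbData);
    }

    public static void main(String[] args) {
        Period span = Period.of(1, 2, 3);
        String column = toColumn(span);

        System.out.println(column);                          // P1Y2M3D
        System.out.println(toAttribute(column).equals(span)); // true
        System.out.println(toColumn(null));                   // null
    }
}
```

The printed column value matches the literal in the INSERT statement above. Without the null checks, a null attribute would cause a NullPointerException in attribute.toString().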

AttributeConverter Java and JDBC types

In cases when the Java type specified for the "database side" of the conversion (the second AttributeConverter bind parameter) is not known,

Hibernate will fall back to a java.io.Serializable type.

If the Java type is not known to Hibernate, you will encounter the following message:

HHH000481: Encountered Java type for which we could not locate a JavaType and which does not appear to implement equals and/or hashCode. This can lead to significant performance problems when performing equality/dirty checking involving this Java type. Consider registering a custom JavaType or at least implementing equals/hashCode.

A Java type is "known" if it has an entry in the JavaTypeRegistry. While Hibernate does load many JDK types into

the JavaTypeRegistry, an application can also expand the JavaTypeRegistry by adding new JavaType

entries as discussed in Compositional basic mapping and TypeContributor.

Mapping an AttributeConverter using HBM mappings

When using HBM mappings, you can still make use of the Jakarta Persistence AttributeConverter because Hibernate supports

such mapping via the type attribute as demonstrated by the following example.

Let’s consider we have an application-specific Money type:

Money type

public class Money {

private long cents;

public Money(long cents) {

this.cents = cents;

}

public long getCents() {

return cents;

}

public void setCents(long cents) {

this.cents = cents;

}

}

Now, we want to use the Money type when mapping the Account entity:

Account entity using the Money type

public class Account {

private Long id;

private String owner;

private Money balance;

//Getters and setters are omitted for brevity

}

Since Hibernate has no knowledge of how to persist the Money type, we could use a Jakarta Persistence AttributeConverter

to transform the Money type to a Long. For this purpose, we are going to use the following

MoneyConverter utility:

MoneyConverter implementing the Jakarta Persistence AttributeConverter interface

public class MoneyConverter

implements AttributeConverter<Money, Long> {

@Override

public Long convertToDatabaseColumn(Money attribute) {

return attribute == null ? null : attribute.getCents();

}

@Override

public Money convertToEntityAttribute(Long dbData) {

return dbData == null ? null : new Money(dbData);

}

}

To map the MoneyConverter using HBM configuration files, you need to use the converted:: prefix in the type

attribute of the property element.

AttributeConverter

<?xml version="1.0"?>

<!DOCTYPE hibernate-mapping PUBLIC

"-//Hibernate/Hibernate Mapping DTD 3.0//EN"

"http://www.hibernate.org/dtd/hibernate-mapping-3.0.dtd">

<hibernate-mapping package="org.hibernate.orm.test.mapping.converter.hbm">

<class name="org.hibernate.orm.test.mapping.converter.hbm.Account" table="account" >

<id name="id"/>

<property name="owner"/>

<property name="balance"

type="converted::org.hibernate.orm.test.mapping.converter.hbm.MoneyConverter"/>

</class>

</hibernate-mapping>

AttributeConverter Mutability Plan

A basic type that’s converted by a Jakarta Persistence AttributeConverter is immutable if the underlying Java type is immutable

and is mutable if the associated attribute type is mutable as well.

Therefore, mutability is given by the JavaType#getMutabilityPlan

of the associated entity attribute type.

This can be adjusted by using @Immutable or @Mutability on any of:

- the basic value

- the AttributeConverter class

- the basic value type

See Mapping basic values for additional details.

Immutable types

If the entity attribute is a String, a primitive wrapper (e.g. Integer, Long), an Enum type, or any other immutable Object type,

then you can only change the entity attribute value by reassigning it to a new value.

Considering we have the same Period entity attribute as illustrated in the AttributeConverters section:

@Entity(name = "Event")

public static class Event {

@Id

@GeneratedValue

private Long id;

@Convert(converter = PeriodStringConverter.class)

@Column(columnDefinition = "")

private Period span;

//Getters and setters are omitted for brevity

}

The only way to change the span attribute is to reassign it to a different value:

Event event = entityManager.createQuery("from Event", Event.class).getSingleResult();

event.setSpan(Period

.ofYears(3)

.plusMonths(2)

.plusDays(1)

);

Mutable types

On the other hand, consider the following example where the Money type is mutable.

public static class Money {

private long cents;

//Getters and setters are omitted for brevity

}

@Entity(name = "Account")

public static class Account {

@Id

private Long id;

private String owner;

@Convert(converter = MoneyConverter.class)

private Money balance;

//Getters and setters are omitted for brevity

}

public static class MoneyConverter

implements AttributeConverter<Money, Long> {

@Override

public Long convertToDatabaseColumn(Money attribute) {

return attribute == null ? null : attribute.getCents();

}

@Override

public Money convertToEntityAttribute(Long dbData) {

return dbData == null ? null : new Money(dbData);

}

}

A mutable Object allows you to modify its internal structure, and Hibernate’s dirty checking mechanism is going to propagate the change to the database:

Account account = entityManager.find(Account.class, 1L);

account.getBalance().setCents(150 * 100L);

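entityManager.persist(account);

Although the AttributeConverter types can be mutable so that dirty checking, deep copying, and second-level caching work properly, treating these as immutable (when they really are) is more efficient.

For this reason, prefer immutable types over mutable ones whenever possible.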
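An in-place change can only be detected if a copy of the loaded state is kept for comparison, which is one reason mutable types cost more than immutable ones. The sketch below illustrates the idea in plain Java. It is a simplification under that assumption, not Hibernate's implementation, and the changed helper and snapshot variables are hypothetical.

```java
public class MutableSnapshotSketch {

    // Mutable value type, as in the Money example.
    static class Money {
        private long cents;

        Money(long cents) {
            this.cents = cents;
        }

        long getCents() {
            return cents;
        }

        void setCents(long cents) {
            this.cents = cents;
        }
    }

    // Hypothetical comparison of a stored snapshot with the current value.
    static boolean changed(Money snapshot, Money current) {
        return snapshot.getCents() != current.getCents();
    }

    public static void main(String[] args) {
        Money balance = new Money(100 * 100L);

        // Sharing the reference: the "snapshot" changes together with the value.
        Money sharedSnapshot = balance;
        // Copying the state: the snapshot keeps the originally loaded value.
        Money copiedSnapshot = new Money(balance.getCents());

        balance.setCents(150 * 100L);

        System.out.println(changed(sharedSnapshot, balance)); // false: the change goes unnoticed
        System.out.println(changed(copiedSnapshot, balance)); // true: the change is detected
    }
}
```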

Using the AttributeConverter entity property as a query parameter

Assuming you have the following entity:

Photo entity with AttributeConverter

@Entity(name = "Photo")

public static class Photo {

@Id

private Integer id;

@Column(length = 256)

private String name;

@Column(length = 256)

@Convert(converter = CaptionConverter.class)

private Caption caption;

//Getters and setters are omitted for brevity

}

And the Caption class looks as follows:

Caption Java object

public static class Caption {

private String text;

public Caption(String text) {

this.text = text;

}

public String getText() {

return text;

}

public void setText(String text) {

this.text = text;

}

@Override

public boolean equals(Object o) {

if ( this == o ) {

return true;

}

if ( o == null || getClass() != o.getClass() ) {

return false;

}

Caption caption = (Caption) o;

return text != null ? text.equals( caption.text ) : caption.text == null;

}

@Override

public int hashCode() {

return text != null ? text.hashCode() : 0;

}

}

And we have an AttributeConverter to handle the Caption Java object:

Caption Java object AttributeConverter

public static class CaptionConverter

implements AttributeConverter<Caption, String> {

@Override

public String convertToDatabaseColumn(Caption attribute) {

return attribute.getText();

}

@Override

public Caption convertToEntityAttribute(String dbData) {

return new Caption( dbData );

}

}

Traditionally, you could only use the database representation of the Caption, which in our case is a String, when referencing the caption entity property.

Caption property using the DB data representation

Photo photo = entityManager.createQuery(

"select p " +

"from Photo p " +

"where upper(caption) = upper(:caption) ", Photo.class )

.setParameter( "caption", "Nicolae Grigorescu" )

.getSingleResult();

In order to use the Java object Caption representation, you have to get the associated Hibernate Type.

Caption property using the Java Object representation

SessionFactoryImplementor sessionFactory = entityManager.getEntityManagerFactory()

.unwrap( SessionFactoryImplementor.class );

final MappingMetamodelImplementor mappingMetamodel = sessionFactory

.getRuntimeMetamodels()

.getMappingMetamodel();

Type captionType = mappingMetamodel

.getEntityDescriptor( Photo.class )

.getPropertyType( "caption" );

Photo photo = (Photo) entityManager.createQuery(

"select p " +

"from Photo p " +

"where upper(caption) = upper(:caption) ", Photo.class )

.unwrap( Query.class )

.setParameter(

"caption",

new Caption( "Nicolae Grigorescu" ),

(BindableType) captionType

)

.getSingleResult();

By passing the associated Hibernate Type, you can use the Caption object when binding the query parameter value.

3.2.51. Registries

We’ve covered JavaTypeRegistry and JdbcTypeRegistry a few times now, mainly with regard to mapping resolution

as discussed in Resolving the composition. But they each also serve additional important roles.

The JavaTypeRegistry is a registry of JavaType references keyed by Java type. In addition to mapping resolution,

this registry is used to handle Class references exposed in various APIs such as Query parameter types.

JavaType references can be registered through @JavaTypeRegistration.

The JdbcTypeRegistry is a registry of JdbcType references keyed by an integer code. As discussed in

JdbcType, these type-codes typically match with the corresponding code from

java.sql.Types, but that is not a requirement - integers other than those defined by java.sql.Types can

be used. This might be useful for mapping JDBC User Data Types (UDTs) or other specialized database-specific

types (e.g., PostgreSQL’s UUID type). In addition to its use in mapping resolution, this registry is also used

as the primary source for resolving "discovered" values in a JDBC ResultSet. JdbcType references can be

registered through @JdbcTypeRegistration.
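The idea of a registry keyed by integer type codes can be illustrated with a toy map. This is only a sketch of the keying scheme, not the JdbcTypeRegistry API: the descriptor names are placeholder strings and CUSTOM_UUID_CODE is a hypothetical code chosen outside the values defined by java.sql.Types.

```java
import java.sql.Types;
import java.util.HashMap;
import java.util.Map;

public class TypeCodeRegistrySketch {

    // Hypothetical code that is not one of the java.sql.Types constants.
    static final int CUSTOM_UUID_CODE = 100_001;

    // Toy registry: integer type code -> placeholder descriptor name.
    static Map<Integer, String> buildRegistry() {
        Map<Integer, String> registry = new HashMap<>();
        registry.put(Types.VARCHAR, "varchar-descriptor");
        registry.put(Types.INTEGER, "integer-descriptor");
        // Codes beyond java.sql.Types can be registered in the same way.
        registry.put(CUSTOM_UUID_CODE, "uuid-descriptor");
        return registry;
    }

    public static void main(String[] args) {
        Map<Integer, String> registry = buildRegistry();
        System.out.println(registry.get(Types.VARCHAR));    // varchar-descriptor
        System.out.println(registry.get(CUSTOM_UUID_CODE)); // uuid-descriptor
    }
}
```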

See TypeContributor for an alternative to registering types through @JavaTypeRegistration and

@JdbcTypeRegistration.

3.2.52. TypeContributor

org.hibernate.boot.model.TypeContributor is a contract for overriding or extending parts of the Hibernate type

system.

There are many ways to integrate a TypeContributor. The most common is to define the TypeContributor as

a Java service (see java.util.ServiceLoader).
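As a sketch of the Java service approach, a provider-configuration file named after the contract is placed on the classpath and lists the implementing class, following the java.util.ServiceLoader convention. The class name com.example.MyTypeContributor below is hypothetical.

```
# File: META-INF/services/org.hibernate.boot.model.TypeContributor
com.example.MyTypeContributor
```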

TypeContributor is passed a TypeContributions reference, which allows registration of custom JavaType,

JdbcType and BasicType references.

While TypeContributor still exposes the ability to register BasicType references, this is considered

deprecated. As of 6.0, these BasicType registrations are only used while interpreting hbm.xml mappings,

which are themselves considered deprecated. Use Custom type mapping or Compositional basic mapping instead.

3.2.53. Case Study : BitSet

We’ve covered many ways to specify basic value mappings so far. This section will look at mapping the

java.util.BitSet type by applying the different techniques covered so far.